
Many of us have heard Dr. Eric Cole say in SEC401: SANS Security Essentials, “Prevention is ideal, but detection is a must.”

We can do everything right in our efforts to build high-quality, secure applications and use all the best firewalls and rulesets imaginable, yet some attacks are still getting through. The fact is, nothing is 100% secure. For this reason, it is important to have proper logging and detection in place so that your team can respond to potential incidents promptly.

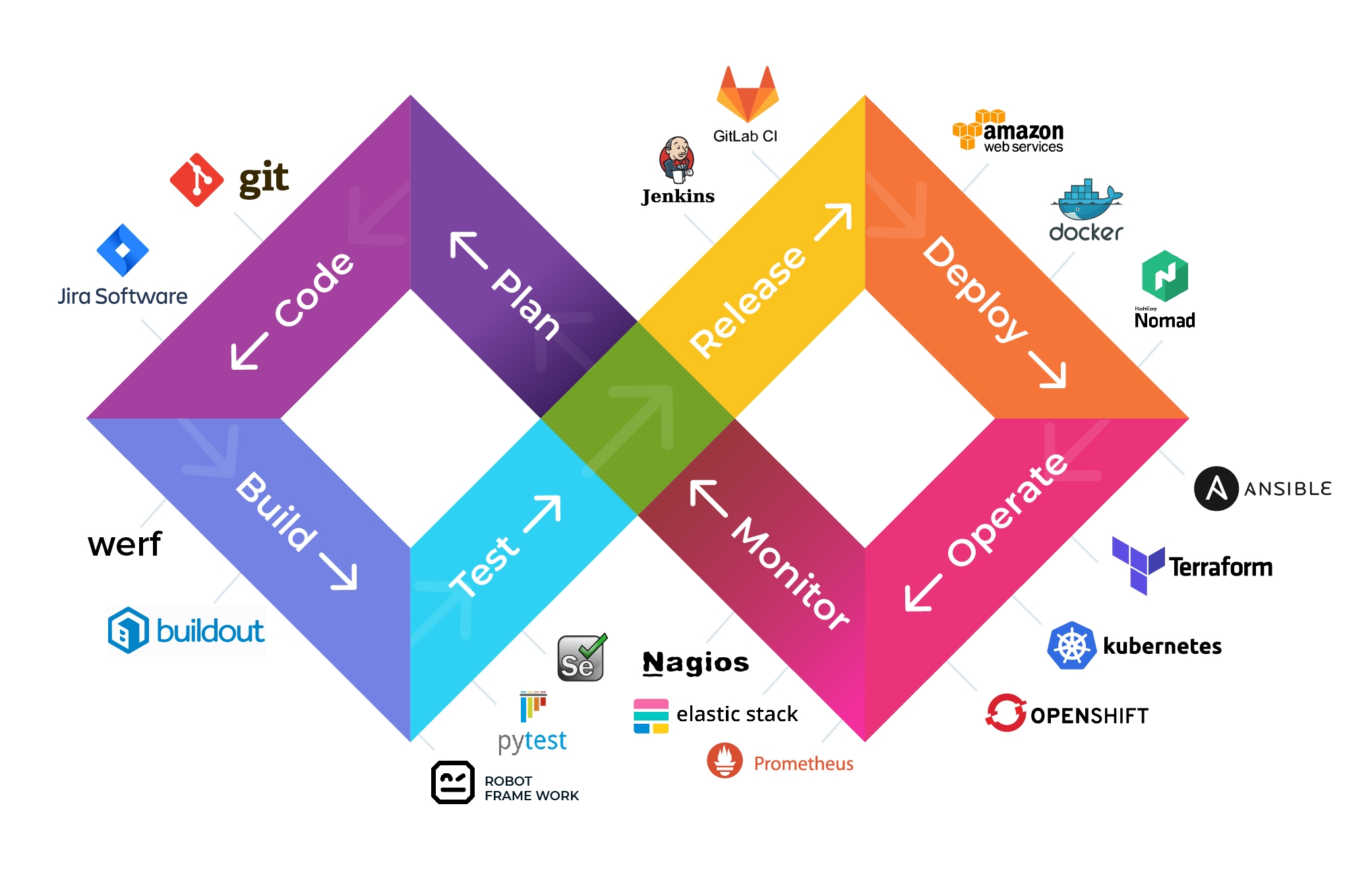

Robust logging should be carefully planned out and “baked-in” to applications and cloud infrastructure throughout all phases of the CI/CD, DevOps Lifecycle, or SDLC.

One of the best DevOps lifecycle and toolchain charts, courtesy of quintagroup.com

Each of the Big 3 Public Cloud Service Providers (AWS | Azure | GCP) offers various security and logging services to help you achieve your goals. Enable and use these services wherever possible. Many, if not all, of them can also feed commercial big-data analytics and Security Information and Event Management (SIEM) systems, where additional analytics can be run and alerts can be created.

Here are just a few of the services that we should be using in our cloud environments to help ensure early detection and swift response.

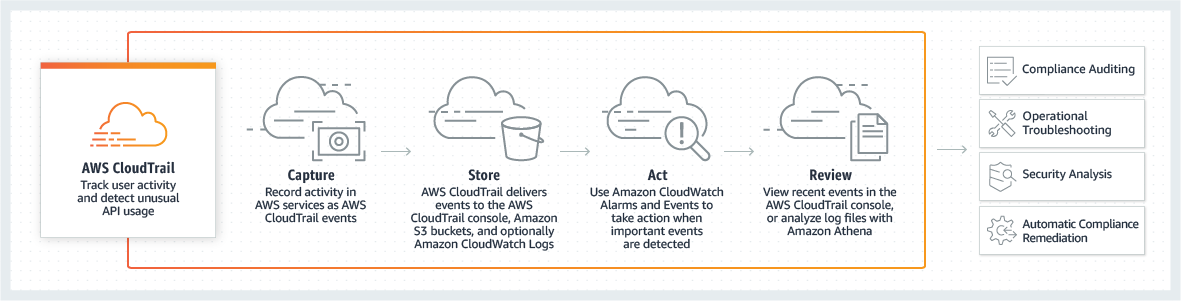

Cloud Management Plane Logging

Cloud Management Plane Logging services provide excellent visibility into what is taking place throughout the cloud environment. That visibility is critically important in traditional IT infrastructure, and it is even more critical now that computing infrastructure and environments are accessed over the public internet. These services show who or what attempted to access a given service or resource, when it happened, where the request came from, and how the service or resource responded to each request.

Services like AWS’s CloudTrail, Azure’s Activity Logs, and GCP’s Cloud Audit Logs directly support and even enrich Governance, Risk Management, and Compliance (GRC) efforts by providing both operational and risk auditing capabilities. Logging is continuous, and the Detect, Respond, and Recover components of the NIST Cybersecurity Framework can all be automated using other cloud services within the cloud environment, as well as in conjunction with many open source tools and commercial services.

These are among the main logging services at the core of almost every other security and logging service supporting cloud infrastructures.
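To make the who, what, when, and where questions concrete, here is a minimal Python sketch that reduces one management-plane audit record to those answers. The field names follow the general CloudTrail record layout, but the sample record and all of its values are invented for illustration, and `summarize_event` is a hypothetical helper rather than part of any AWS SDK.

```python
import json

# Hypothetical sample record: field names follow the CloudTrail record layout,
# but every value below is invented for illustration.
SAMPLE_RECORD = json.dumps({
    "eventTime": "2021-01-15T12:34:56Z",
    "eventSource": "s3.amazonaws.com",
    "eventName": "GetObject",
    "awsRegion": "us-east-1",
    "sourceIPAddress": "203.0.113.10",
    "userIdentity": {
        "type": "IAMUser",
        "arn": "arn:aws:iam::123456789012:user/example-user",
    },
    "errorCode": "AccessDenied",
})


def summarize_event(raw_record: str) -> dict:
    """Reduce one audit record to who / what / when / where / outcome."""
    record = json.loads(raw_record)
    identity = record.get("userIdentity", {})
    return {
        "who": identity.get("arn", identity.get("type", "unknown")),
        "what": f'{record.get("eventSource", "unknown")}:{record.get("eventName", "unknown")}',
        "when": record.get("eventTime", "unknown"),
        "where": f'{record.get("sourceIPAddress", "unknown")} ({record.get("awsRegion", "unknown")})',
        # Assumption: an absent errorCode is treated as a successful request.
        "outcome": record.get("errorCode", "Success"),
    }


summary = summarize_event(SAMPLE_RECORD)
for question, answer in summary.items():
    print(f"{question:>8}: {answer}")
```

In practice the records would come from the provider's log delivery (for example, files in a storage bucket or a log stream) rather than an inline string, and a SIEM would typically do this normalization for you.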

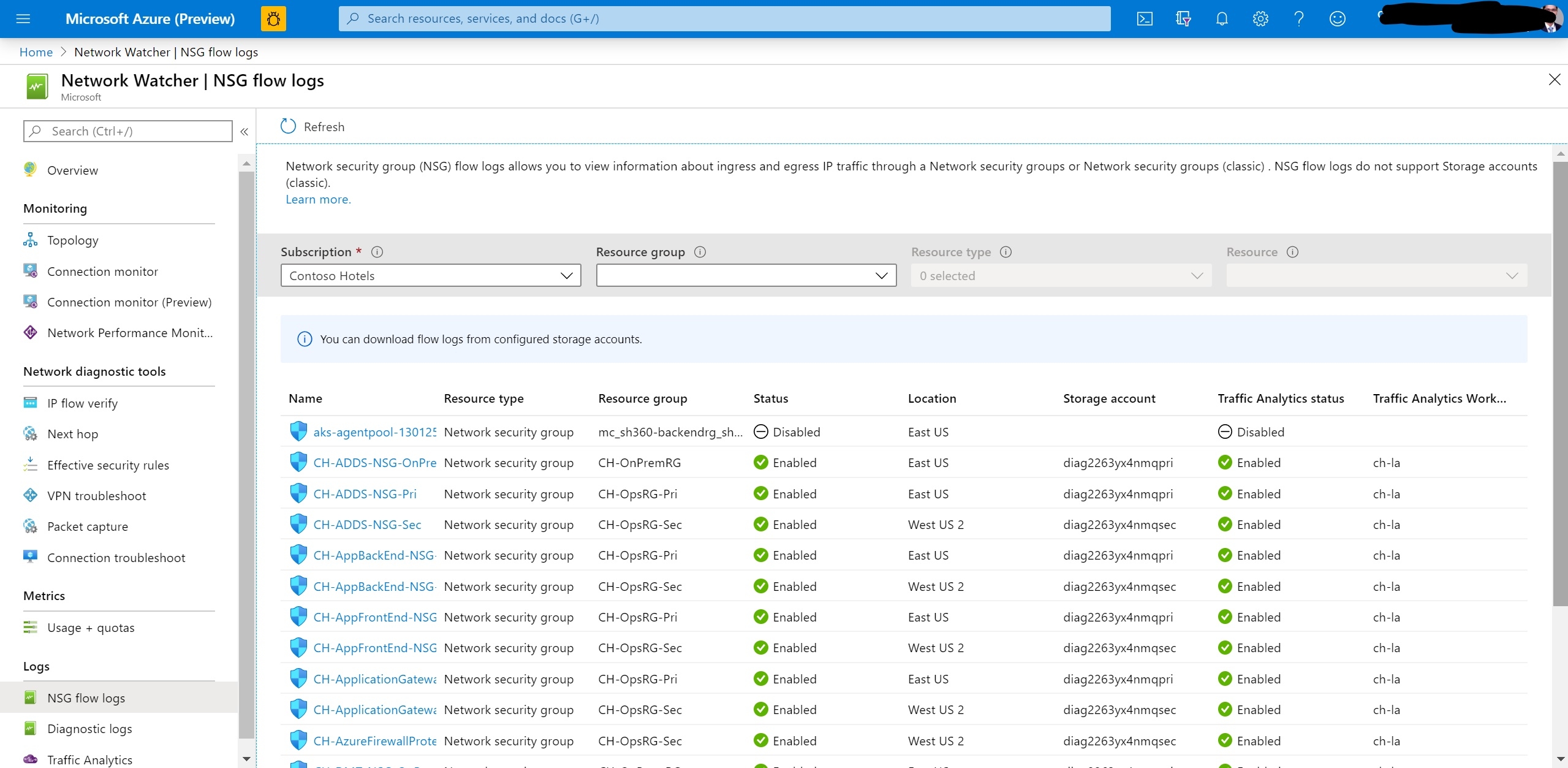

Flow Logs

Another core logging service is Flow Logs. These logs provide visibility into the traffic within your cloud environment. Specifically, they capture “information about the IP traffic going to and from network interfaces in your VPC”. AWS’s VPC Flow Logs contain:

- Account-IDs

- Network Interfaces

- ACCEPTed and REJECTed Traffic

- Source and destination IP addresses and ports

- TCP flag sequence

- IPv6 traffic

AWS’s VPC Flow Logs can also capture traffic through NAT and transit gateways.
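As a rough illustration of working with this metadata, the sketch below parses flow log lines and pulls out the REJECTed traffic. It assumes the default (version 2) space-separated VPC Flow Logs record format; custom formats that add fields such as TCP flags would need a different field list. The sample lines, addresses, and the `parse_flow_record` helper are made up for this example.

```python
# Field order of the default (version 2) VPC Flow Logs record format.
DEFAULT_FIELDS = [
    "version", "account_id", "interface_id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log_status",
]

# Invented sample lines for illustration only.
SAMPLE_LINES = [
    "2 123456789012 eni-0a1b2c3d 203.0.113.12 10.0.1.5 49152 22 6 4 240 1610000000 1610000060 REJECT OK",
    "2 123456789012 eni-0a1b2c3d 10.0.1.5 10.0.2.9 44321 443 6 10 5200 1610000000 1610000060 ACCEPT OK",
]


def parse_flow_record(line: str) -> dict:
    """Map one space-separated flow log line onto the default field names."""
    values = line.split()
    if len(values) != len(DEFAULT_FIELDS):
        raise ValueError(f"expected {len(DEFAULT_FIELDS)} fields, got {len(values)}")
    return dict(zip(DEFAULT_FIELDS, values))


# Keep only the traffic that a security group or network ACL rejected.
rejected = [r for r in map(parse_flow_record, SAMPLE_LINES) if r["action"] == "REJECT"]

for r in rejected:
    print(f'{r["srcaddr"]}:{r["srcport"]} -> {r["dstaddr"]}:{r["dstport"]} rejected')
```

A burst of REJECT records against port 22 from a single source, as in the first sample line, is the kind of pattern worth alerting on once these logs reach your monitoring service.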

Azure’s NSG Flow Logs and GCP’s VPC Flow Logs collect similar data points in their respective flow logs, with some differences.

Flow Logs do have some limitations, though. Unfortunately, they do not capture all network traffic the way monitoring on a traditional network can. But keep in mind that cloud-native technologies and applications are fundamentally different from traditional physical servers and network equipment. With cloud computing, everything is driven by software, i.e., Anything as a Service (XaaS). That means that everything is code: Infrastructure as Code and Software-Defined Networking. My main point here is that much of what is missing from Flow Logs can arguably be made up for with other services and application-layer logs.

One of those services that can help to close some of the gaps with Flow Logs is Traffic Mirroring. Traffic Mirroring is a relatively new service and is similar to a traditional network tap. Depending on your specific operational use case, you might decide to implement just one of these network logging services, or both.

Here is a quick comparison of VPC Flow Logs and VPC Traffic Mirroring, pulled from Day 4 of SEC488:

- Flow Logs collect network metadata :: Traffic Mirroring captures raw network payloads

- Flow Logs can be sent to CloudWatch Log Groups :: Traffic Mirroring can be sent to an Elastic Load Balancer, EC2 Instances running Security Onion or Zeek, or a Virtual Appliance running Splunk

- Flow Logs are limited to two configurations/VPC :: Traffic Mirroring destinations require using AWS Nitro instance types

Now, the only thing missing from a Who, What, Where, When, Why, and How incident response or investigation is the Why. But wait: when these logging services are combined with other security monitoring and analytics, the Why in our quest towards ultimate visibility starts to come into the picture.

Log Monitoring and Dashboards

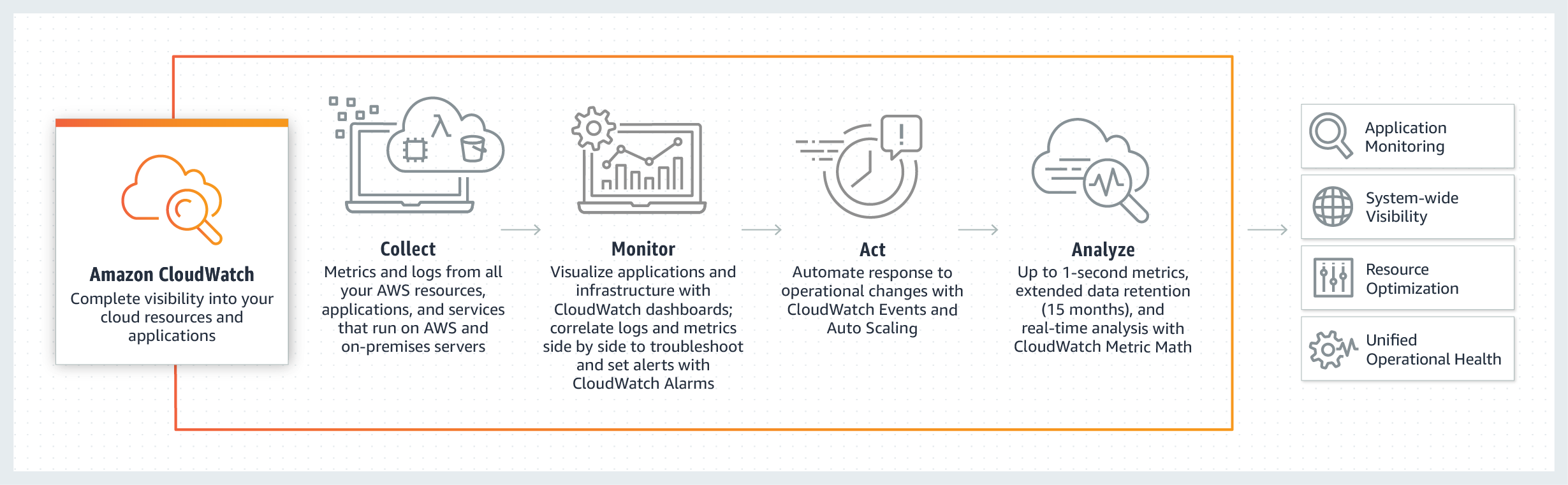

Log monitoring and dashboarding services are at the core of any good operational and security program. Services like Amazon CloudWatch, Azure Monitor and GCP’s Stackdriver Monitoring collect/ingest metrics, logs, and events from many of the other resources and applications in the cloud computing environment. The data is digested and analyzed, and can then be visualized through various dashboarding capabilities. This allows for further analysis, alerting, automated incident response and recovery, and other actionable insights for both technical and business leadership teams.

In addition to collecting metrics and logs from other cloud resources and applications, these services are also able to collect system-level data from EC2 Instances/Cloud VMs, other compute resources, and even on-premises servers. This can be accomplished through the use of Host Log Forwarding Agents. These forwarding agents collect and forward system-level logs and metrics, including in-guest metrics, across various operating systems. Additionally, custom metrics from applications and services can be collected using the StatsD and collectd protocols.
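For a sense of what a custom StatsD metric looks like on the wire, here is a small Python sketch. It builds a datagram in the basic StatsD `<name>:<value>|<type>` form and includes a fire-and-forget UDP sender. The metric name is hypothetical, the sketch covers only counters, gauges, and timers, and the address `127.0.0.1:8125` is an assumption (8125 is the conventional StatsD port; check how your forwarding agent is configured).

```python
import socket

# Assumption: only the three basic StatsD types are handled here.
SUPPORTED_TYPES = {"c", "g", "ms"}  # counter, gauge, timer (milliseconds)


def format_statsd(name: str, value: float, metric_type: str = "c") -> str:
    """Build one StatsD datagram of the form <name>:<value>|<type>."""
    if metric_type not in SUPPORTED_TYPES:
        raise ValueError(f"unsupported metric type: {metric_type}")
    return f"{name}:{value}|{metric_type}"


def send_metric(line: str, host: str = "127.0.0.1", port: int = 8125) -> None:
    """Send the datagram over UDP. UDP is connectionless, so this does not confirm delivery."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(line.encode("ascii"), (host, port))


# Hypothetical metric name for a failed-login counter.
line = format_statsd("app.login.failures", 1, "c")
print(line)
```

Calling `send_metric(line)` from the application each time a login fails would hand the counter to a local agent listening on that port, which can then forward it to the monitoring service alongside the system-level metrics.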

This is an excellent way to work towards centralizing all of your logs and metrics, which allows for better analysis of the whole computing environment. Without visibility of the entire computing environment, you have no way of knowing about any backdoors that may have been left open. You can’t protect what you don’t know about, and you certainly cannot detect or respond to an event if you do not have any log (record) of it ever happening.

Another great feature of CloudWatch is the ability to visualize the data through dashboards and metric alarms. Metric alarms allow teams to set operational high and low thresholds that can trigger alarms when metrics cross the respective thresholds. These alarms would indicate when something is operating outside of predetermined bounds and should be further analyzed and possibly responded to.
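The idea behind a metric alarm can be sketched in a few lines of Python. This is a simplified model, not CloudWatch's actual evaluation logic: the `ThresholdAlarm` class, its default of three consecutive datapoints, and the sample CPU values are all assumptions made for illustration. Only the state names mirror the ones CloudWatch alarms use.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ThresholdAlarm:
    """Simplified alarm: fires when the most recent datapoints all fall outside [low, high]."""
    name: str
    low: float
    high: float
    periods: int = 3  # consecutive breaching datapoints required (assumed default)

    def state(self, datapoints: Sequence[float]) -> str:
        recent = datapoints[-self.periods:]
        if len(recent) < self.periods:
            return "INSUFFICIENT_DATA"
        if all(value > self.high or value < self.low for value in recent):
            return "ALARM"
        return "OK"


# Hypothetical CPU utilization alarm with a low bound of 5% and a high bound of 80%.
cpu_alarm = ThresholdAlarm(name="cpu-utilization", low=5.0, high=80.0, periods=3)

print(cpu_alarm.state([40, 85, 91, 97]))  # last three datapoints all exceed 80
print(cpu_alarm.state([40, 85, 60, 97]))  # 60 is within bounds, so no alarm
print(cpu_alarm.state([90, 95]))          # fewer than three datapoints
```

Requiring several consecutive breaches rather than a single one is a common way to keep short spikes from paging the team.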

AI Threat Detection

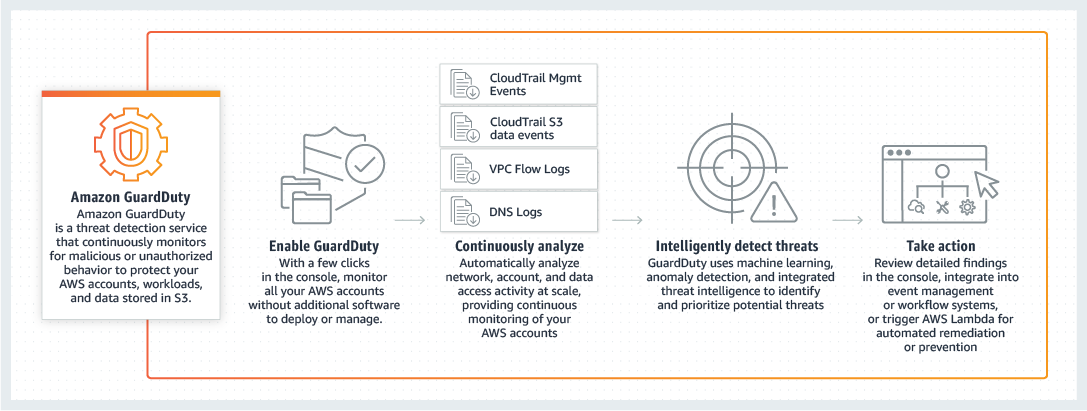

Now that we have a solid foundation of logging and monitoring in place, we can expand our detection efforts with various artificial intelligence services like AWS GuardDuty, Azure Advanced Threat Detection, and GCP Event Threat Detection.

AWS GuardDuty is a “threat detection service that continuously monitors for malicious activity and unauthorized behavior to protect your AWS accounts, workloads, and data stored in Amazon S3. The service uses machine learning, anomaly detection, and integrated threat intelligence to identify and prioritize potential threats.”

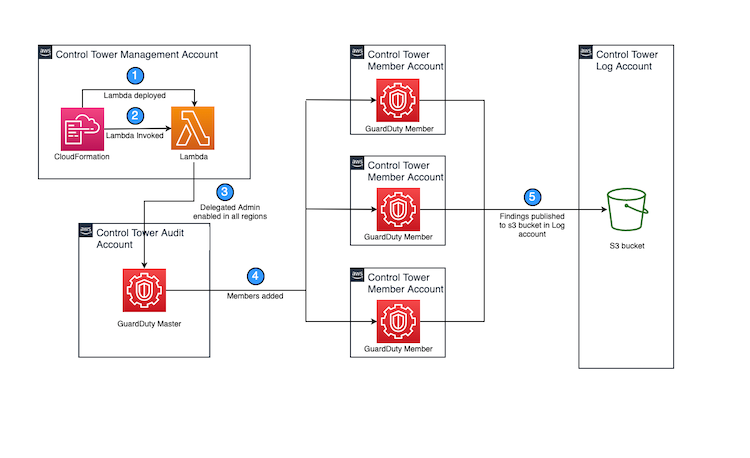

GuardDuty also integrates with CloudWatch for actionable alerts and is easy to aggregate across multiple accounts for a full-picture view of your entire AWS portfolio of accounts. One convenient feature to help achieve complete account coverage and visibility is that you can automate its use for Control Tower managed environments. This diagram describes the components of the solution, which can be deployed using an AWS CloudFormation template. (Zhou, 2020)

A few other excellent value-adds of GuardDuty: it “comes integrated with threat intelligence feeds from AWS, CrowdStrike, and Proofpoint,” response and recovery efforts can be automated, and it is easy to scale and centrally manage.

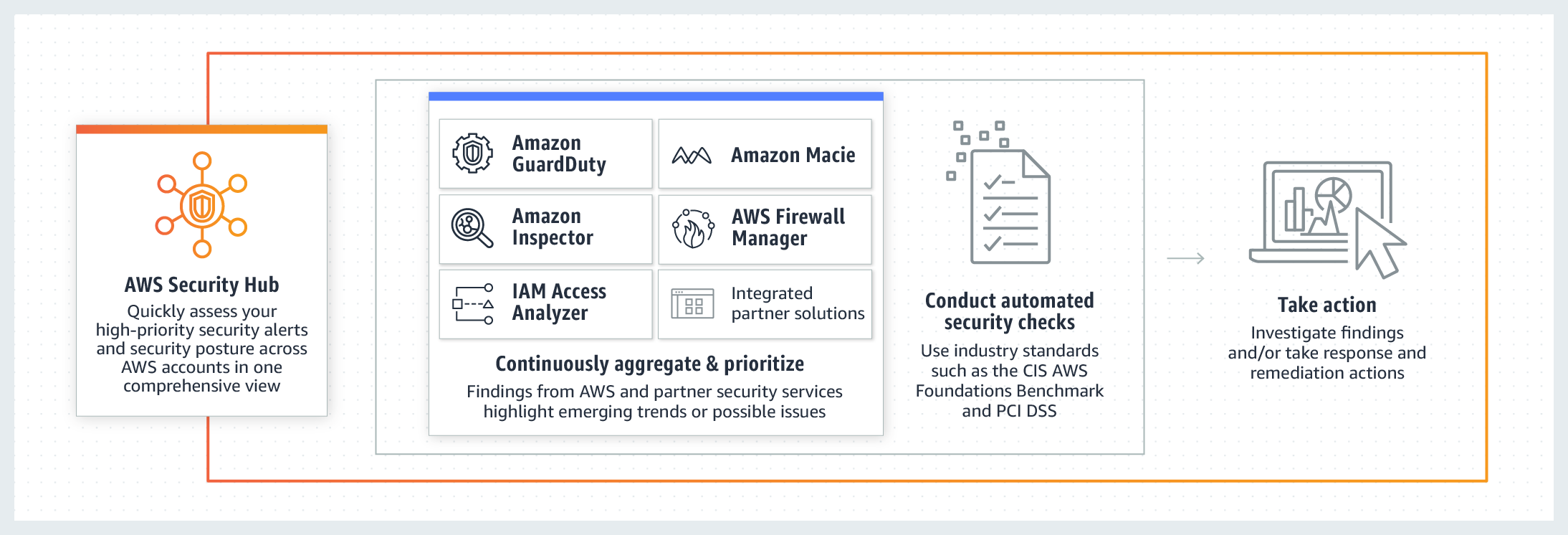

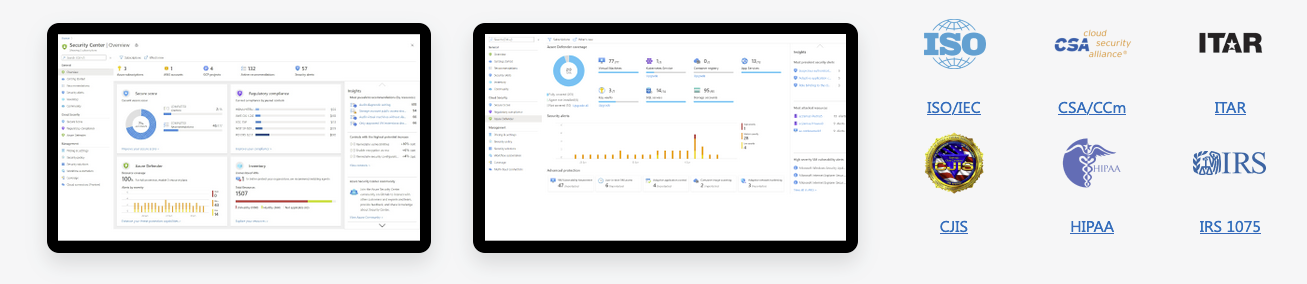

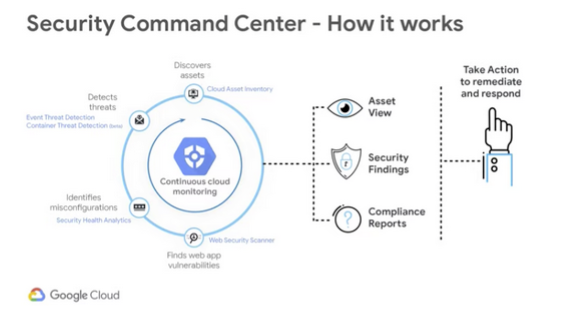

The Single Pane of Glass

Oftentimes it can be a difficult chore, or maybe a labor of love, to juggle all of the security tools and systems that must be maintained in order to have full visibility into an organization’s security and compliance posture. That effort continues to exist, and is possibly even more challenging, in cloud computing environments. Each of the Big 3 offers a solution to assist with this effort: AWS Security Hub, Azure Security Center, and GCP Security Command Center. At their core, these services bring in alerts and findings from many other systems and provide a centralized location to view, analyze, and respond to events.

What’s great is that we’re now able to get all of our vulnerability scanning and compliance assessments, along with many other monitoring and alerting services, in one place that Security Operations Teams can work from. From these services, you can also evaluate your cloud environment, applications, and other systems against industry best practices, GRC Frameworks, and other regulations and standards. Again, this works to provide a simplified view of your cloud environment’s security and compliance posture.
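Conceptually, the centralizing step looks something like the Python sketch below: findings from several tools are merged into one list and ordered by severity so the most urgent items surface first. The finding schema, source names, resources, and the `consolidate` helper are all invented for illustration; the real services each define their own finding formats.

```python
from collections import Counter

# Lower rank sorts first; unknown severities fall to the end of the list.
SEVERITY_RANK = {"CRITICAL": 0, "HIGH": 1, "MEDIUM": 2, "LOW": 3}

# Hypothetical findings from two made-up sources (schema invented for this sketch).
threat_findings = [
    {"source": "threat-detection", "resource": "vm-web-01", "severity": "HIGH",
     "title": "Outbound traffic to known-bad IP"},
    {"source": "threat-detection", "resource": "bucket-logs", "severity": "LOW",
     "title": "Unusual API caller location"},
]
scanner_findings = [
    {"source": "vuln-scanner", "resource": "vm-web-01", "severity": "CRITICAL",
     "title": "Unpatched remote code execution flaw"},
    {"source": "vuln-scanner", "resource": "vm-db-02", "severity": "MEDIUM",
     "title": "Outdated TLS configuration"},
]


def consolidate(*sources):
    """Merge findings from every source and sort them from most to least severe."""
    merged = [finding for source in sources for finding in source]
    return sorted(merged, key=lambda f: SEVERITY_RANK.get(f["severity"], len(SEVERITY_RANK)))


all_findings = consolidate(threat_findings, scanner_findings)
by_resource = Counter(f["resource"] for f in all_findings)

for f in all_findings:
    print(f'{f["severity"]:<8} {f["resource"]:<12} {f["title"]} ({f["source"]})')
print("Most findings:", by_resource.most_common(1))
```

Seeing that one resource shows up in both the threat detection and vulnerability scanning results is exactly the kind of correlation a single pane of glass is meant to make obvious.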

These are just a few of the services that are attempting to tie everything up together so that we can see everything we want to see in order to make better, faster, risk-based decisions.

Final Thoughts...

To accomplish the same level of visibility in traditional on-premises computing environments would take a significant amount of time, money, and resources, all of which are limited at today’s speed of business. Cloud technologies have made it easier than ever to build a first-class security program, and maturing your logging and monitoring efforts can often be accomplished with just a few clicks or code changes to enable services. With just these few services, we can get pretty close to seeing everything we want to see, yet there’s still so much more that can be done with a little imagination and elbow grease. In the cloud, you’re only limited by your imagination.

Come check out SEC488: Cloud Security Essentials to learn more about these services and many others that can help you build and secure your public clouds.

About The Author

Jonathan Kirby is a Cloud Security and DevSecOps Consultant with JKirby Security, as well as a Cloud Security Engineer and Security Program Manager at Digital Media Solutions. He helps teams build security into their processes, workflows, pipelines, and culture through a risk-based, holistic, and collaborative approach. Jonathan is passionate about leadership, cloud security automation, DevSecOps, and the human elements of security. He holds multiple industry certifications including GSLC, GCSA, GSNA, and GSEC.