While many cloud attacks are similar to traditional on-prem attacks, there are also novel cloud attack techniques of which to be aware.

This blog post is the second in the two-part series on cloud attack scenarios. You can access part one in the link below.

In part one of this series, you saw that threat actors targeting cloud workloads may do so in much the same way they attack on-premises infrastructure. In this blog post, we will discuss two (of a nearly limitless number of) techniques commonly used by attackers that are quite different from what you would find in a more traditional, on-premises network.

There are two unconventional cloud credential theft techniques that I would like to cover in this blog, and lucky for me, I have already written a blog post on the first unique-to-cloud capability that attackers will focus their efforts on: Instance Metadata Services (IMDS). You can find that blog here.

Another unique attack vector can be a simple one: trying to find credentials on a compromised system. There is an expectation, of course, that the attacker has successfully accessed a cloud administrator, cloud engineer, or developer workstation, or has obtained the credentials another way. For instance, accessing these credentials can be done by:

Let’s focus on the attacker having access to the workstation. A best practice for attackers (and penetration testers, for that matter) is to enumerate the compromised system looking for either “the loot” that they may be after in the first place or the ability to pivot to other, more sensitive user accounts, networked systems, or environments like a cloud account.

In the case of AWS, Azure, and GCP, Table 1 below shows the common locations where these plain-text credentials (yes, you read that right) are stored when using command line interface (CLI) tools or software development kits (SDKs) from these vendors. Two notes before taking a look at Table 1:

Credential Location | Description |

~/.aws/credentials | AWS access key ID and secret access key (plaintext) |

~/.azure/msal_token_cache.json | Azure access and refresh tokens (plaintext) |

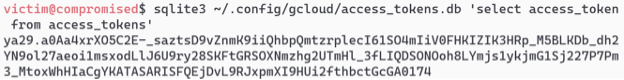

~/.config/gcloud/access_tokens.db | SQLite database containing GCP access tokens (may as well be plaintext) |

~/.config/gcloud/credentials.db | SQLite database GCP bearer tokens (ditto) |

You can likely imagine how easy it would be to access this information as an attacker. The most difficult would be GCP, not because it is protected any better than AWS or Azure tool-generated files, but because it requires the attacker to understand how to connect to a SQLite database and query it for the information they are after. Spoiler alert: it is not that hard as you can see in Figure 1 below.
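To make the point concrete for defenders, here is a minimal sketch of how little effort it takes to inspect an arbitrary SQLite file with nothing but the Python standard library. It only lists table and column names, and it makes no assumption about what the gcloud databases actually contain. The function name `list_sqlite_tables` is a hypothetical helper for illustration.

```python
import sqlite3
from contextlib import closing
from pathlib import Path


def list_sqlite_tables(db_path):
    """Return {table_name: [column names]} for a SQLite file, opened read-only."""
    # mode=ro asks SQLite to open the file without modifying it.
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    with closing(sqlite3.connect(uri, uri=True)) as conn:
        names = [
            row[0]
            for row in conn.execute(
                "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
            )
        ]
        # PRAGMA table_info returns (cid, name, type, notnull, dflt_value, pk).
        return {
            name: [col[1] for col in conn.execute(f'PRAGMA table_info("{name}")')]
            for name in names
        }
```

Calling `list_sqlite_tables(Path.home() / ".config/gcloud/credentials.db")` on a workstation where the file exists would return its table layout. No password, key, or special tooling is involved, which is why the file format alone should not be treated as a protection.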

To defend against these unconventional cloud credential attacks, focus first on tighter control of credentials. There have been several instances of credentials finding their way into places they should not be, such as source code or public-facing cloud services. This is why the focus should be on building automated tooling to discover these sensitive files or, better yet, prevent them from being placed into these locations.
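As one small example of that kind of automated discovery, the sketch below checks a home directory for the credential files listed in Table 1 and reports whether each is readable by group or other users. It assumes a POSIX-style file system, and the function name `audit_home` is hypothetical. Treat it as a starting point, not a complete endpoint audit.

```python
import stat
from pathlib import Path

# Relative paths taken from Table 1.
CREDENTIAL_FILES = [
    ".aws/credentials",
    ".azure/msal_token_cache.json",
    ".config/gcloud/access_tokens.db",
    ".config/gcloud/credentials.db",
]


def audit_home(home):
    """Return (path, mode string, readable_by_group_or_other) for each file found."""
    findings = []
    for rel in CREDENTIAL_FILES:
        path = Path(home) / rel
        if path.is_file():
            mode = path.stat().st_mode
            loose = bool(mode & (stat.S_IRGRP | stat.S_IROTH))
            findings.append((str(path), stat.filemode(mode), loose))
    return findings


if __name__ == "__main__":
    for path, mode, loose in audit_home(Path.home()):
        flag = "readable beyond owner" if loose else "owner-only"
        print(f"{path}  {mode}  {flag}")
```

Even an owner-only file is still plaintext to anyone running as that user, so a finding here is a prompt to ask whether long-lived credentials need to be on the workstation at all.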

For example, if you have developers on staff who deploy to cloud services, chances are high that one of them will eventually make a mistake and deploy credentials along with the code. Fortunately, there are purpose-built tools like git-secrets from Amazon (which can look for any string or regular expression pattern you tell it to, not just AWS credentials) that discover credential information and stop it from being committed before it leaves the development system. This, of course, requires the security team to follow through by installing and configuring the software on the endpoints and training the development staff to use it properly.
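The idea behind this kind of pattern-based scanning can be sketched in a few lines. This is not how git-secrets itself is implemented. It is a simplified illustration that assumes AWS access key IDs are 20 uppercase alphanumeric characters starting with `AKIA` (long-term) or `ASIA` (temporary). The function name `find_candidate_keys` is hypothetical.

```python
import re

# Assumption: access key IDs look like AKIA/ASIA followed by 16 uppercase
# letters or digits. Real tools ship broader, better-tested patterns.
AWS_ACCESS_KEY_ID = re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b")


def find_candidate_keys(text):
    """Return (line number, matched string) for every candidate key ID in text."""
    return [
        (lineno, match.group(0))
        for lineno, line in enumerate(text.splitlines(), start=1)
        for match in AWS_ACCESS_KEY_ID.finditer(line)
    ]


if __name__ == "__main__":
    # AKIAIOSFODNN7EXAMPLE is the placeholder key ID used in AWS documentation.
    sample = "[default]\naws_access_key_id = AKIAIOSFODNN7EXAMPLE\n"
    for lineno, key in find_candidate_keys(sample):
        print(f"line {lineno}: possible AWS access key ID {key}")
```

A check like this is only useful when it runs before the commit leaves the developer's machine, for example from a Git pre-commit hook, which is the workflow git-secrets is built around.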

As for phishing and social engineering, the defense has not changed. If the security team has an opportunity to deploy tools to combat these types of attacks, do it. However, no tool is perfect at spotting not-so-obvious phishing attempts, so the need for security awareness training that keeps users' phish-spotting skills sharp does not change when moving to cloud (or any other type of environment).

Another attack technique that can impact on-premises and cloud environments alike is cryptocurrency mining. The impact of these operations may not be at the forefront of your security concerns, but if you pay for your cloud services, it should be. That’s because crypto mining operations, to be of value to an attacker, require an enormous amount of compute resources, and your organization pays for those resources if it is compromised by a crypto mining attack. In general, crypto mining costs more in cloud spend than it returns, but the attacker does not care because, again, you are footing the bill.

You may be wondering how these operations occur. Well, let’s first assume the attacker has access to your cloud account (and may even be using some of the techniques mentioned in this blog as well as Part 1 in the series). Once they have access and the appropriate level of permission, they have a few options. The first option would be the most obvious to many: deploy VMs or container instances to perform the crypto mining using attacker-deployed software. This is the tried-and-true method as seen in the 2017 Tesla breach. The attacker accessed a Kubernetes cluster due to an extreme lack of security controls (and by lack, I mean no authentication whatsoever to a Kubernetes web interface which granted immediate administrative control to the cluster and all its components). From there, they simply deployed a crypto mining container instance and were quickly caught.

One of the most novel crypto mining campaigns to date, however, was performed by an attack group known as AMBERSQUID. As reported in an excellent piece by Alessandro Brucato at Sysdig, these attackers took a vastly different approach. At a high level, the attackers started with access to a cloud account and deployed:

That is a lot of crypto mining! Would you recognize these services being used in the above ways? It is tricky to spot, to say the least, especially if you are doing a poor job of generating and monitoring cloud metrics and log data.

Since there is so much going on in the example AMBERSQUID attack, let’s break down the defenses by making a few key recommendations for both prevention and detection of these types of attacks. For prevention, one of the mantras that predates cloud is, “If you are not using a service, turn it off.” The same applies here, but how do you “turn off” a cloud service? In some cases, like in GCP, you can disable the API. In other environments, the APIs are always available, so you will need to think slightly outside the box. AWS provides Service Control Policies (SCPs), and Azure has the Azure Policy service; both can limit, account- or tenant-wide, what your users can and cannot do. A simple fix would be to create a policy that denies access to services not in use across the organization.
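For AWS, a policy of this kind might look like the sketch below, which generates an SCP document that denies every action outside an allow list of service prefixes by combining `Effect: Deny` with `NotAction`. The function name and the example service list are hypothetical. An overly narrow list can lock administrators out, so test against a non-production organizational unit first.

```python
import json


def build_deny_unused_services_scp(allowed_service_prefixes):
    """Build an SCP that denies all actions except those of the listed services."""
    # Each prefix (e.g. "s3") becomes a wildcard action such as "s3:*".
    actions = sorted(f"{prefix}:*" for prefix in set(allowed_service_prefixes))
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyServicesNotInUse",
                "Effect": "Deny",
                "NotAction": actions,
                "Resource": "*",
            }
        ],
    }


if __name__ == "__main__":
    # Hypothetical list of services this organization actually uses.
    in_use = ["ec2", "s3", "iam", "sts", "cloudtrail", "cloudwatch"]
    print(json.dumps(build_deny_unused_services_scp(in_use), indent=2))
```

With a policy like this attached, a call to a service the organization never adopted would be denied regardless of the permissions on the compromised identity.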

You can also use the above policies to restrict how much hardware a compute resource can consume because, at the end of the day, if a cloud vendor has to compute, store, or send data out of its environment, you are very likely paying for it. And the more the vendor computes, stores, or sends across a network, the more you will pay. So, ask yourself the following question: “Do your users even need to spin up a system with 128 virtual CPUs?” If the answer is “no” or “it depends,” you need to use policies to restrict who, if anyone, can spin up large systems.
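On AWS, one way to express that restriction is an SCP that denies `ec2:RunInstances` whenever the requested instance type is not on an approved list, using the `ec2:InstanceType` condition key. The sketch below generates such a document. The function name and the approved types are hypothetical, and it covers only EC2, not other services that launch compute on your behalf.

```python
import json


def build_instance_type_scp(allowed_types):
    """Build an SCP that denies launching EC2 instances of unapproved types."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyUnapprovedInstanceTypes",
                "Effect": "Deny",
                "Action": "ec2:RunInstances",
                "Resource": "arn:aws:ec2:*:*:instance/*",
                # With several values, StringNotEquals matches only when the
                # requested type equals none of them.
                "Condition": {
                    "StringNotEquals": {"ec2:InstanceType": sorted(set(allowed_types))}
                },
            }
        ],
    }


if __name__ == "__main__":
    # Hypothetical approved sizes; choose what your workloads really need.
    print(json.dumps(build_instance_type_scp(["t3.micro", "t3.small"]), indent=2))
```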

From the detection side, a common mantra in the cloud space (and from the Center for Internet Security (CIS) with their first two Critical Controls) is to “know thy network.” This network now includes cloud accounts and services. And by knowing the network here, we are not just talking about layer 3 and 4 traffic (although that is important to understand and monitor too); we are also focused on Identity and Access Management (IAM) users, groups, and permissions, which services are in use, and how these services are used (by monitoring API calls). This will help defenders more easily spot abnormalities. These abnormalities could be an attacker or something more benign, like a new capability that you were not tracking but are now aware of.
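A minimal sketch of that "learn what is normal, then flag what is new" approach is shown below. It assumes API activity records shaped like AWS CloudTrail events, each with `eventSource` and `eventName` fields. The function names and the sample events are hypothetical, and a real pipeline would also consider the caller identity, region, and time.

```python
from collections import Counter


def build_baseline(events):
    """Return the set of (service, API call) pairs seen during a known-good period."""
    return {(e["eventSource"], e["eventName"]) for e in events}


def find_new_activity(baseline, events):
    """Return {(service, API call): count} for pairs absent from the baseline."""
    counts = Counter((e["eventSource"], e["eventName"]) for e in events)
    return {pair: n for pair, n in counts.items() if pair not in baseline}


if __name__ == "__main__":
    # Hypothetical sample data for illustration only.
    normal = [
        {"eventSource": "s3.amazonaws.com", "eventName": "GetObject"},
        {"eventSource": "ec2.amazonaws.com", "eventName": "DescribeInstances"},
    ]
    today = [
        {"eventSource": "s3.amazonaws.com", "eventName": "GetObject"},
        {"eventSource": "sagemaker.amazonaws.com", "eventName": "CreateNotebookInstance"},
    ]
    for (source, name), n in find_new_activity(build_baseline(normal), today).items():
        print(f"new activity: {source} {name} x{n}")
```

A first-ever call to a service the organization does not use is exactly the kind of abnormality worth a look, whether it turns out to be an attacker or a team trying something new.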

Easier said than done. You will need to ensure that you are collecting the right telemetry data to learn what is normal. For the most part, cloud vendors do offer some level of API monitoring, but there is so much more you can collect as a customer if you turn it on. Some examples include metrics information related to your VMs, containers, and serverless functions (i.e., customer-provided code that executes on vendor infrastructure when triggered by an event), network flow logs, cloud service proxy logs, cloud storage access logs, and other services that record their interactions.

In this blog series, we found that attackers, as always, are getting crafty and "thinking outside the box." If they can mine crypto, they will. If they can hold it for ransom, they will. If they can steal it and sell it, they will. If they can wreck your environment because they do not like you, they will.

We must, at a minimum, begin to address the following questions BEFORE we migrate to cloud:

To better understand the latest tactics, techniques, and procedures attackers are using, reference MITRE ATT&CK, subscribe to threat feeds, and stay on top of the latest breach information.

To ensure you and your cloud security team are prepared with the knowledge, strategies, and capabilities to prevent attacks against your organization’s cloud infrastructure, I have authored and teach the SANS SEC502: Cloud Security Tactical Defense course and co-authored and teach the SANS SEC541: Cloud Security Threat Detection course. I hope to see you in class!

Ryan’s extensive experience, including roles as a cybersecurity engineer for major Department of Defense cloud projects and as a lead auditor, underscores his dedication to enhancing the security posture of critical systems.

Read more about Ryan Nicholson