SEC595: Applied Data Science and AI/Machine Learning for Cybersecurity Professionals

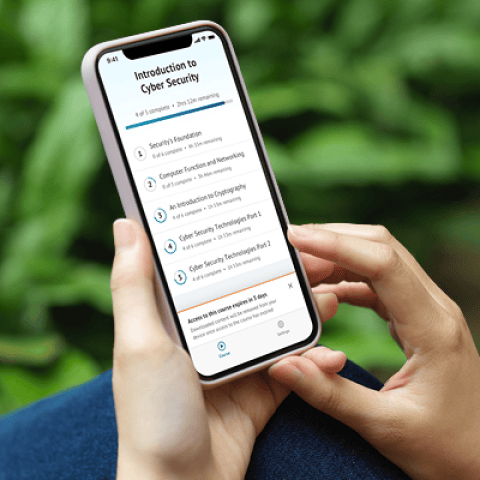


Apply your credits to renew your certifications

Attend a live, instructor-led class at a location near you or remotely, or train on your time over 4 months

Course material is geared for cyber security professionals with hands-on experience

Apply what you learn with hands-on exercises and labs

Gain hands-on skills in Detection Engineering and SIEM, learning the processes for understanding logs, enhancing existing logging solutions, and creating detection content that fits your needs.

SEC555 teaches excellent, pertinent information along with practical, easy-to-follow lab exercises. A tremendously valuable course!

SEC555: Detection Engineering and SIEM Analytics is a hands-on detection engineering training course that teaches students how to design proactive detection strategies and effectively manage SIEM platforms. Through real-world labs and in-depth analysis, participants learn to interpret logs, craft high-quality detection rules, and uncover hidden threats in both cloud and on-premises environments. Whether you're new to detection engineering or looking to sharpen your skills, this course prepares you to extract meaningful insights from complex data and build a more responsive, intelligence-driven Security Operations Center (SOC). It also serves as a valuable preparation path for the GCDA certification (GIAC Certified Detection Analyst), which validates advanced capabilities in detection engineering and data-driven defense.

Download the Detection Engineering poster for a visual walkthrough of the Detection Engineering Life Cycle in action.

Nick Mitropoulos is a SANS Certified Instructor and author of SEC555: Detection Engineering and SIEM Analytics. As CEO of Scarlet Dragonfly and a veteran of SOC and incident response leadership, he equips students with real-world skills in detection engineering. Nick also serves on the GIAC Advisory Board, SANS CISO Network, and faculty of the SANS Technology Institute.

Explore the course syllabus below to view the full range of topics covered in SEC555: Detection Engineering and SIEM Analytics.

Section one builds a strong foundation in Detection Engineering and SIEM, covering core concepts, best practices, and modern logging techniques. It prepares students to analyze logs effectively and create agile, scalable detection systems for today’s threat landscape.

This section covers how to collect and enrich logs from key protocols like DNS, SMTP, and HTTP/HTTPS. It also dives into endpoint logs for detecting malicious activity on Windows and Linux systems, and explores host-based firewall and login events.

This section focuses on methods for maintaining accurate asset inventories and identifying unauthorized devices. Students will learn to combine data sources for a clear network view and gain hands-on experience with baselining and anomaly detection to spot threats like C2 activity or suspicious behavior.

This section focuses on building strong cloud visibility across platforms like AWS and Azure. Students will explore key log types, learn to detect attacker activity, and optimize configurations to close monitoring gaps—ensuring effective defense and rapid response in cloud environments.

This section highlights how to centralize and correlate logs from diverse sources to enhance context and prioritization. It also covers building an automated detection engineering pipeline to streamline operations and speed up the creation of effective detections.

Responsible for planning, implementing, and operating network services and systems, including hardware and virtual environments.

This role tests IT systems and networks and assesses their threats and vulnerabilities. Find the SANS courses that map to the Vulnerability Assessment SCyWF Work Role.

Analyze network and endpoint data to swiftly detect threats, conduct forensic investigations, and proactively hunt adversaries across diverse platforms including cloud, mobile, and enterprise systems.

This job, which may have varying titles depending on the organization, is often characterized by the breadth of tasks and knowledge required. The all-around defender and Blue Teamer is the person who may be a primary security contact for a small organization, and must deal with engineering and architecture, incident triage and response, security tool administration and more.

Security Operations Center (SOC) analysts work alongside security engineers and SOC managers to implement prevention, detection, monitoring, and active response. Working closely with incident response teams, a SOC analyst will address security issues when detected, quickly and effectively. With an eye for detail and anomalies, these analysts see things most others miss.

Assess the effectiveness of security controls, reveal and utilise cybersecurity vulnerabilities, and assess their criticality if exploited by threat actors.

As this is one of the highest-paid jobs in the field, the skills required to master the responsibilities involved are advanced. You must be highly competent in threat detection, threat analysis, and threat protection. This is a vital role in preserving the security and integrity of an organization’s data.

Enroll your team as a group or arrange a private session for your organization. We’ll help you choose the format that fits your goals.

This course uses real-world events and hands-on training to allow me to immediately improve my organization’s security stance. Day one back in the office I was implementing what I learned.

The course content is simply incredible, and I will likely be referencing it for years to come! Also, the provided VM is top notch. The labs were really informative.

Overall, this course taught me so much about using a SIEM. I had limited knowledge and skill on using our Security Onion setup, but this course helped develop them with the techniques and concepts taught. It also told me about tools I didn't know we had in our setup. Everyone I work with is rather new to the defensive cybersecurity realm, so there's been a lot to learn.
