Part one of a four-part blog series about what’s needed to shake, shimmy, and shift left

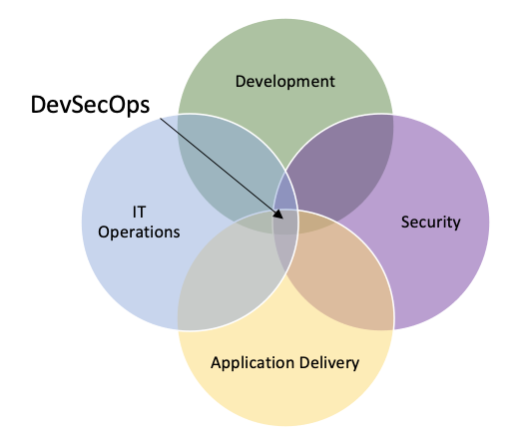

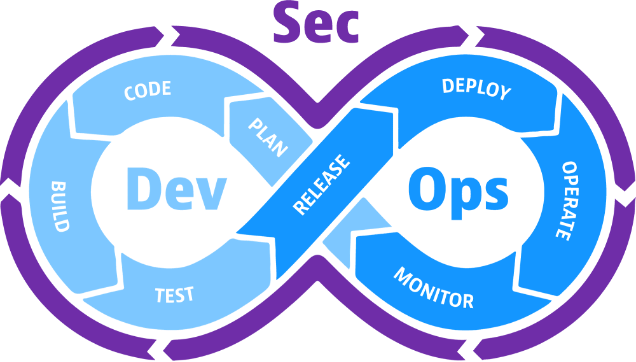

DevSecOps is shorthand for Development, Security, and Operations (also known as “DevOps Security,” “Secure DevOps,” or “SecDevOps”). DevSecOps is an extension of DevOps that codifies security as part of the larger goal structure. To achieve this, DevSecOps frames security as a shared responsibility and something that should be seamlessly integrated, as opposed to an isolated department implementing cumbersome tools, stopgaps, or other means that inhibit development.

In the wild world of technology, security experts have no shortage of jargon, alphabet soup, and a constant flood of new industry terms to learn. Depending on how you look at it, some of these terms can be chalked up to marketing buzzwords, some actually make sense from a technical perspective, and others borrow a little bit from Column A and a little bit from Column B.

What about Shift Left?

Are these concepts and terms helpful in the grand scheme of things?

Spoiler Alert: Absolutely!

Starting from the top, what the heck is DevOps? Or DevSecOps for that matter? How are they similar? How are they different? Are they pretty much the same thing? What benefits do they provide?

Let’s break it down!

Before we get into the nitty gritty of the differences between DevOps and DevSecOps, I want to quickly summarize how application development has changed and how those changes helped to shape these methods. These explanations don’t cover the entirety of application development history; they serve as highlights of how it started compared to where we are today. For the sake of brevity, not every detail, process, component, or iteration will be covered.

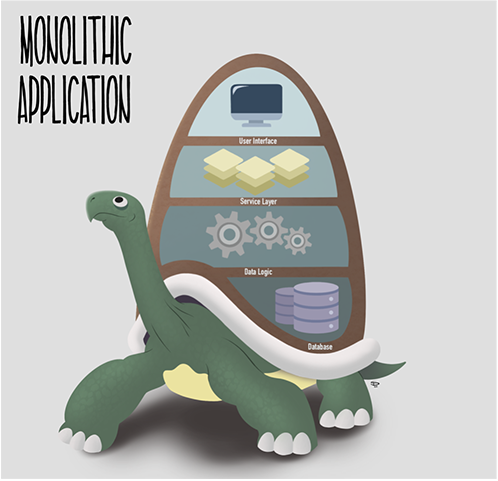

Picture a time long, long ago when the internet was younger, more bright-eyed and bushy-tailed. Application architecture was a very different animal. In fact, think of it like a giant tortoise: large, cumbersome, yet long-lasting and efficient in its own way.

This type of architecture is referred to as monolithic, a traditional unified model for the design of a software program. Monolithic applications were often written in a single programming language and had relatively large code bases and complex designs. Every necessary component is tightly coupled within the confines of the application.

Though monolithic applications were more prevalent in years past, especially in the case of older applications that have stood the test of time, they do still exist today.

Now, monolithic applications did and still do have their benefits. Development and deployment can be done at a relatively fast pace, testing and debugging are simpler, and there is not much overhead from a headcount perspective.

However, that comes with some downsides, too.

As the saying goes, “The bigger they are, the harder they fall.”

Because of that tightly coupled nature, changing anything within the application required testing and recompiling the entire application, meaning there would usually be significant downtime whenever updates or maintenance were needed.

With the realization that databases quickly became resource-intensive components of an application, software architects began uncoupling databases from the confines of the application itself. As much as that was helpful and necessary, it didn’t go far enough. The next logical step was to incorporate a load balancer and distribute replications of the existing data logic across several instances, all leading back to the same database. This way, a single machine would not be overwhelmed by requests. However, the design still had many of the same trappings as prior iterations when it came to handling maintenance and upgrades.
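As a rough illustration of that load-balancing step, here is a minimal round-robin sketch. The class name `RoundRobinBalancer` and the instance names are hypothetical, and real load balancers typically also handle health checks, weighting, and session affinity, which this sketch leaves out.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands each incoming request to the next application instance in turn."""

    def __init__(self, instances):
        if not instances:
            raise ValueError("at least one instance is required")
        self._instances = cycle(instances)

    def route(self, request):
        # Pick the next instance in rotation. In the design described above,
        # every instance would still talk to the same shared database.
        instance = next(self._instances)
        return instance, request


# Hypothetical instance names, for illustration only.
balancer = RoundRobinBalancer(["app-1", "app-2", "app-3"])
assignments = [balancer.route(f"req-{i}")[0] for i in range(5)]
print(assignments)  # ['app-1', 'app-2', 'app-3', 'app-1', 'app-2']
```

No single instance receives every request, but an upgrade to the application logic still has to be rolled out to every replica.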

As things progressed, more and more pieces of the proverbial puzzle fell into place. This uncoupling led to utilizing more flexible and scalable means of infrastructure as well as a shift in architectural design. More organizations came to embrace the cloud and development inevitably moved in that direction, toward the scalability, plasticity, and dexterity it offers.

This is where service-oriented architecture comes in.

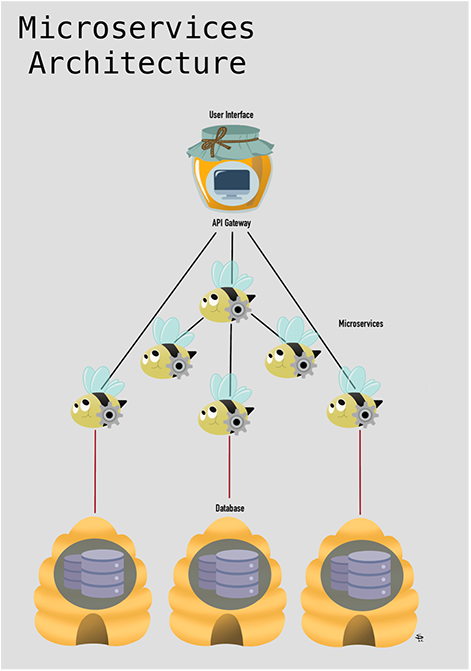

Instead of focusing on a unified, monolithic design, service-oriented architecture acts as a modular means of breaking up monolithic applications into smaller components. From there, microservice-oriented applications rose to popularity. This design is generally regarded as an evolution of service-oriented architecture.

Microservices break data logic into small, well-encapsulated services that are distributed over several devices. These loosely coupled ephemeral services, though completely independent, work together to carry out tasks, much like how worker bees cooperate to accomplish a common goal. These microservices communicate via application programming interfaces (APIs) and are mobilized in line with a particular business function or domain.

A microservice addresses a single concern: Certain microservices perform data searches, some handle logging functions, while others execute web service functions. The list goes on.
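To make the single-concern idea a bit more tangible, here is a small in-process sketch. The two classes and their JSON message shapes are assumptions made up for illustration; in practice each service would run as its own process and be reached over a network API such as HTTP.

```python
import json


class SearchService:
    """Single concern: look up records by keyword."""

    def __init__(self, records):
        self._records = records

    def handle(self, request_json):
        query = json.loads(request_json)["query"].lower()
        hits = [r for r in self._records if query in r.lower()]
        return json.dumps({"hits": hits})


class LoggingService:
    """Single concern: record events sent by other services."""

    def __init__(self):
        self.events = []

    def handle(self, request_json):
        self.events.append(json.loads(request_json)["event"])
        return json.dumps({"status": "ok"})


def search_and_log(search, logger, term):
    # The caller relies only on each service's JSON contract, not its internals.
    reply = json.loads(search.handle(json.dumps({"query": term})))
    logger.handle(json.dumps({"event": f"search:{term}:{len(reply['hits'])}"}))
    return reply["hits"]


search = SearchService(["Firewall rules", "Patch notes", "Firewall audit"])
logger = LoggingService()
hits = search_and_log(search, logger, "firewall")
print(hits)           # ['Firewall rules', 'Firewall audit']
print(logger.events)  # ['search:firewall:2']
```

Because the two services only exchange messages, either one could be rewritten or redeployed without touching the other, as long as the message format stays the same.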

In traditional microservices architecture, best practice is for each service to have its own database. That is the “rule,” but as always, there are exceptions: in some cases, a handful of microservices may share a database. Microservices architecture is flexible and very dependent on your specific needs and requirements.

Speaking of flexibility, unlike their monolithic predecessors, microservices can be changed and updated independently and don’t need the entirety of the application to be recompiled. On one hand, this design makes it a lot easier to manage many aspects of an application; on the other, it means accepting operational complexity in exchange for scalability, elasticity, and autonomy. Operational complexity means there are a lot more steps and resources involved in telling each bee what its job is and how it interacts with its environment, which requires more developers, automation and orchestration efforts, source code management, testing and quality assurance processes, and so on. Moving toward microservices can also bring additional overhead, infrastructure costs, a lack of standardization, debugging challenges, culture changes, and a potential lack of ownership.

To connect these points, at a high level, we’ve gone from tightly coupled, largely self-contained applications to more loosely coupled, flexible, and nimble designs. Over the same period, the shift from on-premises development to cloud-native development gradually became more prevalent.

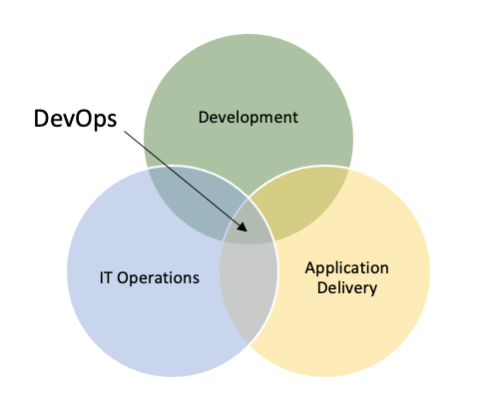

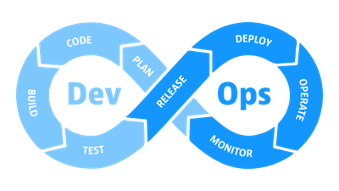

The term DevOps is a combination of Development and Operations. It describes a set of practices, tools, and philosophies that combine Software Development, Application Delivery, and Information Technology (IT) Operations.

This is meant to be a full Software Development Lifecycle (SDLC) investment that enables better development while adopting the practices of Continuous Integration and Continuous Deployment (CI/CD), quality assurance, testing, logging and monitoring, automation, dynamic feedback, as well as holistic orchestration, communication, and collaboration across teams.
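One way to picture the automation side of CI/CD is as an ordered list of stages that stops at the first failure. The sketch below is a toy model under that assumption; the function `run_pipeline` and the stage names are hypothetical, and real pipelines are usually defined in a CI system's own configuration format rather than in application code.

```python
def run_pipeline(stages):
    """Run (name, step) pairs in order and stop at the first failure."""
    completed = []
    for name, step in stages:
        if not step():
            return completed, name  # name of the stage that failed
        completed.append(name)
    return completed, None


# Each step is a callable returning True on success; these are stand-ins.
stages = [
    ("build", lambda: True),
    ("unit-tests", lambda: True),
    ("integration-tests", lambda: False),  # simulated failure
    ("deploy", lambda: True),
]
completed, failed = run_pipeline(stages)
print(completed, failed)  # ['build', 'unit-tests'] integration-tests
```

The deploy stage never runs here, which is the fast feedback the paragraph above is getting at: a problem surfaces at the stage that caught it instead of in production.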

Think of it like this: In DevOps, trust and automation rule. DevOps serves to improve collaboration across teams and focuses on delivering value to improve the overall business.

The way DevOps looks can vary from organization to organization in terms of what teams and roles are involved. What remains consistent is the involvement of roles within the functional areas of product, engineering, information technology, operations, quality assurance, and project management.

With so many cooks in the kitchen, how these steps are tackled and how the project is managed makes all the difference.

Obviously, DevOps doesn’t exist in a vacuum, nor does it have to be the only approach an organization or team uses to accomplish its development goals. Hybrid approaches are a popular way to manage complex and dynamic workflows, and Agile and Scrum can be complementary in achieving these undertakings.

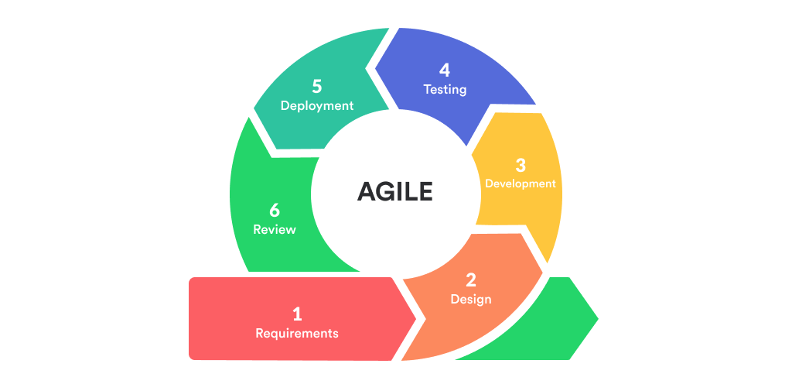

Agile refers to a philosophy that leverages empirical, iterative, and incremental processes. The goal of Agile is to promote trust and create early measurable Return on Investment (ROI) through well-defined delivery of product features.

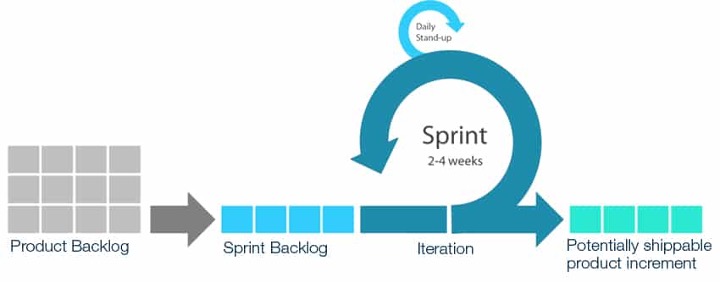

Scrum is a specific Agile development methodology used by project managers that centers on constant communication and feedback. Scrum is a framework for getting work done, whereas Agile is more of a mindset.

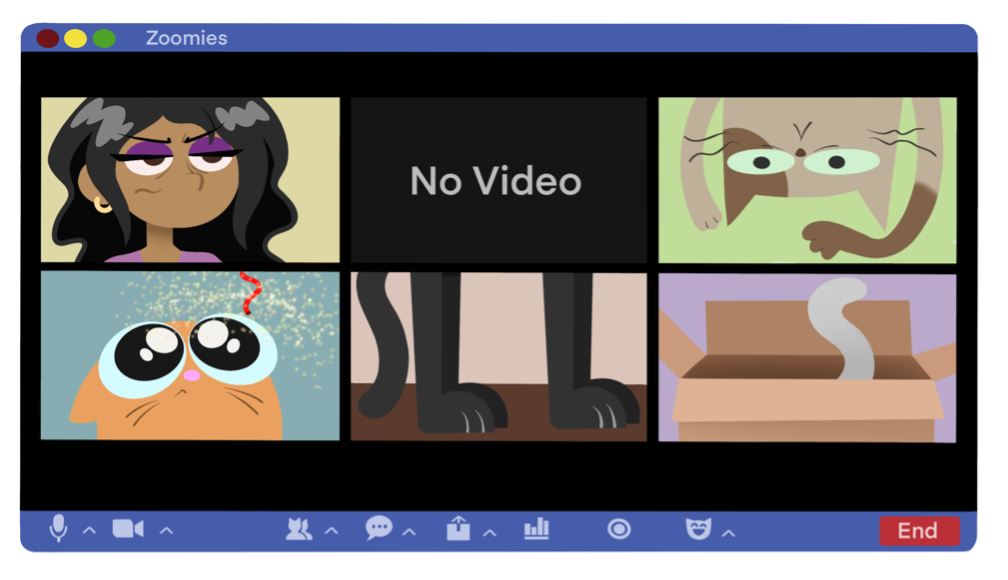

With so many moving parts and silos to break down, project managers might feel like they’re herding cats. Since everyone has their own objectives and priorities, it can be difficult to make sure each person or team is on the “same page.” With the correct knowledge, tools, and frameworks, project managers can align the necessary incentives, motivators, and guidance that make a difference in how cats interact with the world around them (and your furniture).

It is critical to understand how to leverage a wide range of development approaches and project management framework components to maximize resources across various business units. MGT525: Managing Cybersecurity Initiatives and Effective Communication is a great course for leaders and project managers alike, as it meticulously covers these concepts and their application in depth. Project management is valuable because it brings guidance and direction to projects. Learning how to confidently lead development initiatives will impact your ability to deliver on time and within budget, while also reducing organizational risk and complexity, all with driving bottom line value in mind.

The long and short of it is, DevOps is based on a cooperative cultural philosophy that supports the Agile movement in the context of a system-oriented approach.

You might still be wondering: “Where does DevSecOps come in?”

As promised, it’s time to define DevSecOps.

If DevOps is a portmanteau of Development and Operations, I’m sure you’ve already guessed that DevSecOps means Development Secret Operations…

[Wait, no, that’s not right…]

*Aherm*

DevSecOps is shorthand for Development, Security, and Operations (also known as “Secure DevOps” or “SecDevOps”).

DevSecOps is the logical answer to a component missing from DevOps: security. Put simply, DevSecOps is an extension of DevOps that codifies security as part of the larger goal structure. To achieve this, DevSecOps frames security as a shared responsibility and something that should be seamlessly integrated, as opposed to an isolated department implementing cumbersome tools, stopgaps, or other means that inhibit development.

This requires the involvement of security teams in a multi-faceted way. I’ll cover more about the often-strained relationship between security and developers in the next part, but for now, know that it can be common for these two groups to seem at odds with one another. The transition from other methods to DevSecOps may not be the smoothest road, but it is typically the most rewarding.

All in all, incorporating DevSecOps practices yields many benefits that better enable the business, including, but not limited to, faster development cycles, improved security posture, enhanced development team value, and reduction of costs. It’s different from DevOps in the sense that it’s an overall improvement that prioritizes security and supplementary involvement from other business units.

Even now, more evolutions and iterations are occurring, such as Platform Engineering, that build on DevOps and DevSecOps. That’s the beauty of these high-level constructs; there is no sense in reinventing the wheel when the innovation is the tire.

Shift Left is a term used to describe the practice of moving the planning, testing, quality, and performance evaluation functions to earlier in the development process. Similarly, it can convey the concept of adding security and security automation as early as possible. Doing so helps minimize the vulnerabilities that reach production, shortens the feedback loop for developers to address issues, and reduces the time and costs needed to fix security flaws.

Think of Shift Left security the same way you’d approach building a house. To start, you need a plan, a blueprint, a team of specialized workers, and a solid foundation to work from. Security is an essential constituent that, in so many words, guarantees intruders are kept out, your house is structurally sound, and most importantly you, your family, and your belongings are safe. If you build a house with security and longevity already in mind, it will be stronger, more resilient, and easier to maintain.
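In code terms, adding security automation early often looks like a gate that runs before changes are merged. The sketch below is a hedged illustration only: the `Finding` shape, the severity ranking, and the `gate` function are assumptions, not the output format or API of any particular scanner.

```python
from dataclasses import dataclass

# Assumed severity ordering; real tools define their own scales.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass
class Finding:
    rule: str
    severity: str
    path: str


def gate(findings, fail_at="high"):
    """Return (passed, blocking) for a pre-merge security check."""
    threshold = SEVERITY_RANK[fail_at]
    blocking = [f for f in findings if SEVERITY_RANK[f.severity] >= threshold]
    return (not blocking, blocking)


# Hypothetical scanner results for a proposed change.
findings = [
    Finding("hardcoded-secret", "critical", "app/config.py"),
    Finding("weak-hash", "medium", "app/auth.py"),
]
passed, blocking = gate(findings)
print(passed, [f.rule for f in blocking])  # False ['hardcoded-secret']
```

The developer hears about the hardcoded secret while the change is still fresh, rather than after it has shipped. The threshold is a policy choice: set it too strict and the gate becomes one of the cumbersome stopgaps DevSecOps is trying to avoid.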

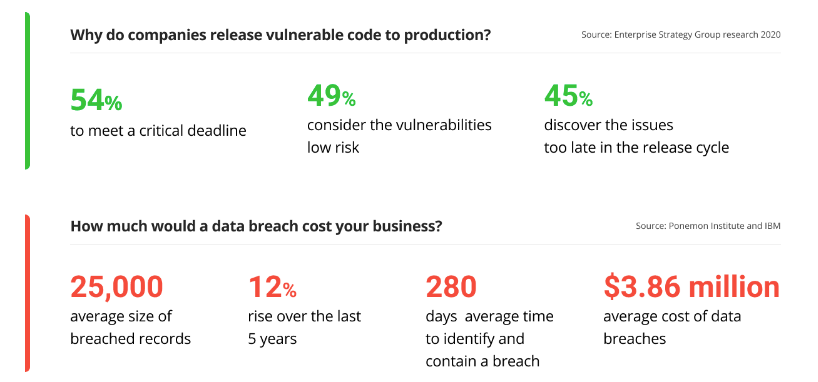

With countless breaches revolving around application vulnerabilities and exploits, the focus on Shifting Left will only become more prominent. According to survey data from the Enterprise Strategy Group on Modern Application Development Security, 54% of companies report releasing vulnerable code into production so that they could meet critical deadlines, while 45% did so because they discovered the vulnerabilities too late in the software development release cycle.

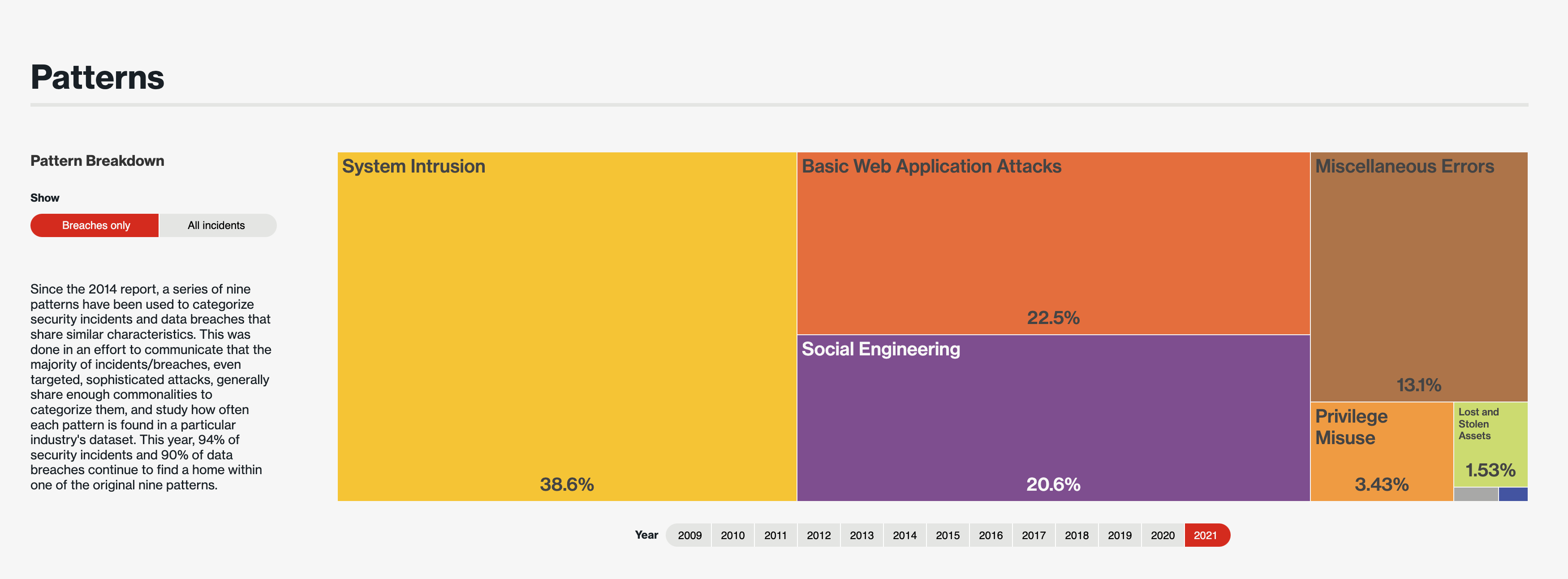

Complementary to that, Verizon’s 2022 Data Breach Investigations Report identifies Basic Web Application Attacks as the second most common way bad actors have compromised systems, coming in at a whopping 22.5% of security incidents. Verizon describes Basic Web Application Attacks as “Any incident in which a web application was the vector of attack. This includes exploits of code-level vulnerabilities in the application as well as thwarting authentication mechanisms.”

With more high-impact vulnerabilities and exploits like Log4j and Apache Struts 2 bubbling to the surface, organizations are buckling down, incorporating DevSecOps, and embracing a Shift Left mentality for more holistic coverage.

Now that we know the what and the why behind DevOps and DevSecOps, our next step is to venture into the details and get a better understanding of how to make sense of each brush stroke that makes up the bigger picture.

Throughout the rest of this blog series, I’ll continue to explore how to look at DevSecOps and Shift Left initiatives through the lens of the people, processes, and technologies involved. The people do the work, the processes make the work more efficient and manageable, and the technology carries out the work, helping to accomplish tasks and automate processes. On paper, that sounds easy, but in practice, it takes a lot of thought, effort, and planning.

Look out for “Part 2: The People” to learn more about the human element behind executing successful DevSecOps initiatives.

Note from the writer: I hope you all enjoyed my debut SANS blog! If you liked my doodle-based stylings, reach out to me on LinkedIn to connect. Also, be sure to subscribe to SANS newsletters to stay up to date on all things infosec!

Stacy Dunn is an artist, gamer, blogger, podcaster, powerlifter, and all-around nerd with a passion for everything information security.
