This is one of a series of blog articles that use the SIFT Workstation. The free SIFT Workstation, which can match any modern forensic tool suite, is also featured and taught in SANS' Advanced Computer Forensic Analysis and Incident Response course (FOR508). SIFT demonstrates that advanced investigations and intrusion response can be accomplished using cutting-edge open-source tools that are freely available and frequently updated.

The SIFT Workstation is a VMware appliance, pre-configured with the necessary tools to perform detailed digital forensic examination in a variety of settings. It is compatible with Expert Witness Format (E01), Advanced Forensic Format (AFF), and raw (dd) evidence formats.

Super-Timeline Background

I started teaching timeline analysis back in 2000, when I first began teaching for SANS. In my first SANS@Night presentation, given in Dec 2000 at what was then called "Capitol SANS", I demonstrated a tool I wrote called mac_daddy.pl, based on the TCT tool mactime. Since that point, every certified GCFA has answered test questions on timeline analysis.

Timeline analysis is now seeing a resurgence thanks to Kristinn Gudjonsson and his tool log2timeline. Kristinn's work in the timeline analysis field will probably change the way many of you approach cases.

First of all, all of these tools are found in the SIFT Workstation and are ready to go out of the box, but you can keep them up to date at Kristinn's website www.log2timeline.net. Kristinn's tool was also added to the FOR508: Advanced Computer Forensic Analysis and Incident Response course last year and has already been taught to hundreds of analysts who are now using it in the field daily.

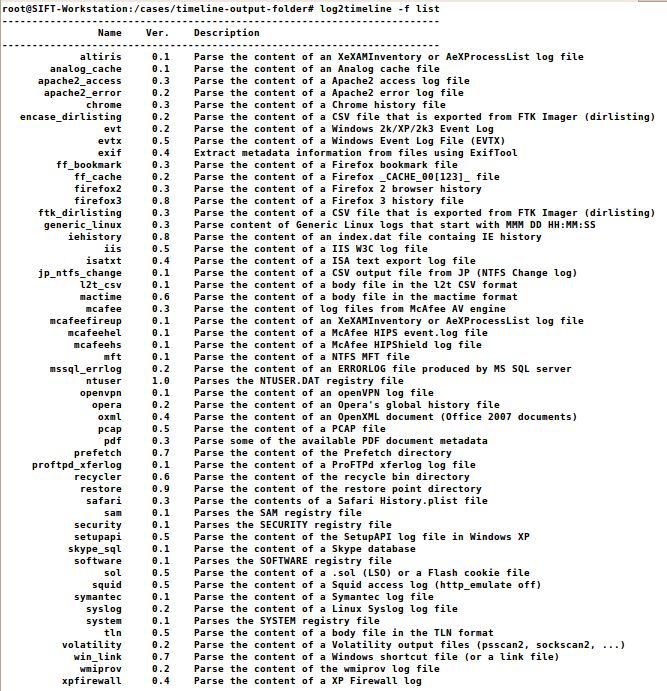

Kristinn's log2timeline tool will parse all of the following data structures and more by AUTOMATICALLY recursing through the directories for you, instead of requiring you to do this manually.

This is a list of the currently available formats log2timeline is able to parse. The tool is being constantly updated so to get the current list of available input modules it is possible to let the tool print out a list:

# log2timeline -f list

Artifacts Automatically Parsed in a SUPER Timeline:

How to automatically create a SUPER Timeline

log2timeline recursively scans through an evidence image (physical or partition) and extracts timestamp data from every artifact type that it supports (see artifacts above). This tutorial will walk a user who is interested in creating their first timeline through the process from start to finish.

Step 0 — Use the SIFT Workstation Distro

Download Latest SIFT Workstation Virtual Machine Distro: http://computer-forensics.sans.org/community/downloads

It is recommended that you use VMware Player for PCs and VMware Fusion for Macs. Alternatively, you can install the SIFT Workstation in any virtual machine or directly on hardware using the downloadable ISO image.

Launch the SIFT Workstation and log in to the console using the password "forensics".

Step 1 — Identify your evidence and gain access to it in the SIFT Workstation

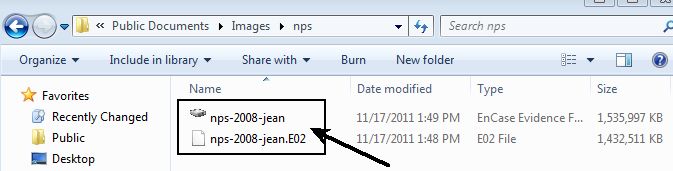

The files used in this example.

- Scenario and Case Goals-> http://digitalcorpora.org/corp/images/nps/nps-2008-jean/M57-Jean.pdf

- Image 1 ->http://digitalcorpora.org/corp/images/nps/nps-2008-jean/nps-2008-jean.E01

- Image 2 -> http://digitalcorpora.org/corp/images/nps/nps-2008-jean/nps-2008-jean.E02

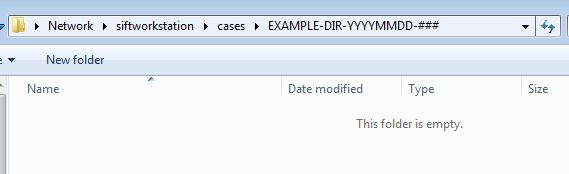

Note that the SIFT Workstation is designed with a separate drive for the /cases directory. This allows you to use a larger virtual drive, or to connect an actual hard drive and mount it at the /cases directory.

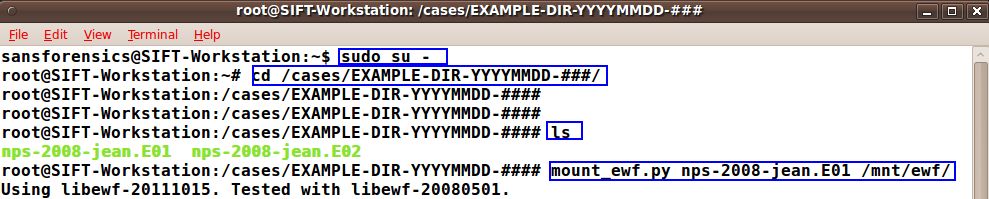

Open in Explorer \siftworkstation\cases\EXAMPLE-DIR-YYYYMMDD-###

If your evidence is an E01, then use this previous article on the topic to mount it correctly inside the SIFT Workstation. If your evidence is RAW, go ahead and skip to STEP 2. Access to the raw image is required as log2timeline cannot parse E01 files... yet.

- $ sudo su -

- # cd /cases/EXAMPLE-DIR-YYYYMMDD-####/

- # mount_ewf.py nps-2008-jean.E01 /mnt/ewf

- # cd /mnt/ewf

Note that the commands entered by the forensicator are highlighted in the blue outlined box.

Step 2 — Create The Super Timeline

Manual creation of a timeline is challenging and still requires some work to get through. We have included in the SIFT Workstation an automated method of generating a timeline via the new log2timeline tool, which can simply be pointed at a raw disk image. Again, if you are examining an E01 or AFF file, please mount it first using mount_ewf.py or affuse, respectively.

Creating a Super Timeline requires you to know whether or not your evidence image is a Physical or a Partition Image. A Physical image will include the entire disk image and can be parsed by the tool mmls to list the partitions. A Partition image will be the actual filesystem (e.g. NTFS) and can be parsed by the tool fsstat to list information about the partition.

Once you have figured out if you have a physical disk image or a partition image, then choose the correct implementation of the command to run with the correct timezone.

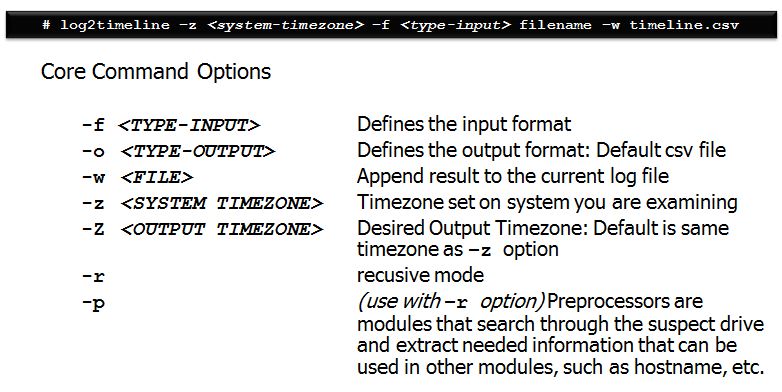

Critical Failure Point Note: There is confusion over what the time zone option (-z) is used for. The -z option is the time zone of the SYSTEM being examined. It is used to normalize time data that is stored in "local" time, and by default the output is written in that same time zone.

Why the need? Certain artifacts, such as setupapi.log files and index.dat files, store times in local system time instead of UTC. Without telling log2timeline what the local system time zone is, it would slurp up the data from those artifacts incorrectly. To correct this, log2timeline converts the data into UTC but then outputs the data back in the same -z time zone by default.

The output of your timeline will always be in the same time zone as your -z option unless you specify a different time zone using the "BIG" -Z option. This allows you to convert a system time of EST5EDT to UTC output if you want to compare computers from two different time zones in a single timeline.

If your time zone includes areas that observe daylight saving time, it is important to use the time zone name that accounts for it. For example, on the East Coast, the correct timezone value would be EST5EDT. For Mountain Time it would be MST7MDT. log2timeline has autocomplete enabled in the SIFT Workstation, so all you need to do is type -z [tab tab] to see all the timezone options that it will recognize. If you do not use this setting correctly with daylight saving accounted for, any timeline data that was stored in local time will be converted incorrectly. To reiterate, using EST as your timezone will treat all timestamps as if they were EST. Using EST5EDT (and other similarly named zones) will take daylight saving into account.
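To see why the choice between EST and EST5EDT matters, here is a minimal Python sketch of the one-hour error. It is not how log2timeline itself performs the conversion; it uses two fixed UTC offsets as stand-ins for the zone rules, and the timestamp is an invented example of a local-time entry written in July.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets standing in for real zone rules (an assumption for illustration).
# "EST" never observes daylight saving time, so it is always UTC-5.
EST = timezone(timedelta(hours=-5), "EST")
# In July, a system configured as EST5EDT is actually on EDT, which is UTC-4.
EDT = timezone(timedelta(hours=-4), "EDT")


def local_to_utc(local_text, tz):
    """Interpret a naive local timestamp in the given zone and return it in UTC."""
    naive = datetime.strptime(local_text, "%Y-%m-%d %H:%M:%S")
    return naive.replace(tzinfo=tz).astimezone(timezone.utc)


# Hypothetical local-time entry, e.g. a line from a setupapi.log written in July.
stamp = "2008-07-15 12:00:00"

wrong = local_to_utc(stamp, EST)  # daylight saving ignored
right = local_to_utc(stamp, EDT)  # daylight saving taken into account

print(wrong.isoformat())  # 2008-07-15T17:00:00+00:00
print(right.isoformat())  # 2008-07-15T16:00:00+00:00
print(wrong - right)      # 1:00:00
```

Every local-time artifact recorded during daylight saving would be shifted by that hour, which is enough to reorder events in a merged timeline.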

In summary: It is crucial that the -z option matches the way the system is configured to produce accurate results.

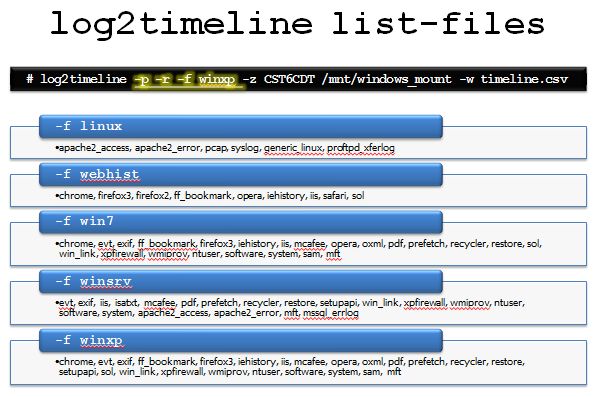

log2timeline LIST-Files

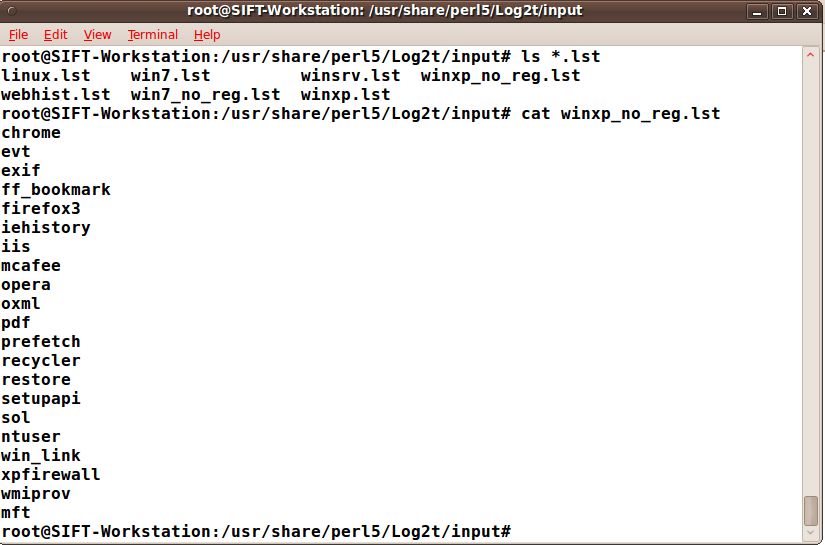

The list-files in log2timeline are vitally important to understand. They can be used to specify exactly which log2timeline modules you would like to run against a given image. There are some default list-files already built into log2timeline, found in the /usr/share/perl5/Log2t/input directory and all ending with the extension .lst. The list-files are always used with the -f list-file option together with -r (recurse directory), and will automatically parse any artifact included in the chosen list-file that is found in the starting directory or any subdirectory of the location you are examining with log2timeline.

Once you understand the .lst files are just a list of artifacts you would like to examine, it is fairly simple to add your own for any type of situation. For example, you could add one for an intrusion investigation against an IIS webserver by using only the artifacts mft, evt, and iis. This will save you a lot of time especially if there are a bunch of IIS log files on the system.

Intermediate log2timeline LIST-Files usage

If you prefer not to create a list-file, log2timeline can take any number of input modules directly, as long as they are separated by commas.

An example: -f winxp,-ntuser,syslog. This will load all the modules in the winxp input list-file, then add the syslog module and remove the ntuser one.

The same can be done here: -f webhist,ntuser,altiris,-chrome. This will load all the modules inside the webhist.lst file, add ntuser and altiris, and then remove the chrome module from the list.
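The add-and-remove behaviour described above can be sketched in a few lines of Python. This is only an illustration of the selection logic, not log2timeline's actual Perl implementation: the function name `resolve_modules` is hypothetical, the list-file contents are invented placeholders, and applying removals after all additions is an assumption.

```python
def resolve_modules(spec, list_files):
    """Expand a comma-separated -f style spec into a sorted list of module names."""
    selected = set()
    removed = set()
    for token in spec.split(","):
        token = token.strip()
        if not token:
            continue
        if token.startswith("-"):
            # A leading minus marks a module to drop from the final set.
            removed.add(token[1:])
        elif token in list_files:
            # A list-file name expands to every module it contains.
            selected.update(list_files[token])
        else:
            # Anything else is treated as a single module name.
            selected.add(token)
    # Removals are applied last, regardless of where they appear (an assumption).
    return sorted(selected - removed)


# Hypothetical list-file contents; the real .lst files may differ.
LIST_FILES = {
    "winxp": ["evt", "mft", "ntuser", "prefetch", "restore"],
    "webhist": ["chrome", "firefox3", "iehistory"],
}

print(resolve_modules("winxp,-ntuser,syslog", LIST_FILES))
# ['evt', 'mft', 'prefetch', 'restore', 'syslog']
print(resolve_modules("webhist,ntuser,altiris,-chrome", LIST_FILES))
# ['altiris', 'firefox3', 'iehistory', 'ntuser']
```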

This is the proper way to form a command using the log2timeline list-files option in the SIFT workstation.

Now that we have an understanding of the basic functionality, let's take a quick look at some cases in which a targeted timeline could be used.

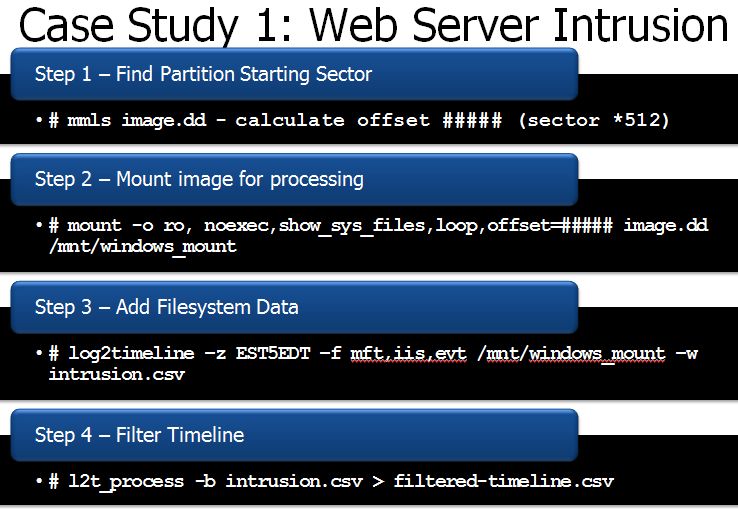

Case Study 1 — Intrusion Incident (IIS Web Server)

Perhaps you are examining a web server for possible traces of an attack. You are not sure when the attack took place, but you would like to look not only at the system's MFT but also at the IIS log files and the system's event logs. This greatly reduces the amount of clutter in your timeline, as you already know that evidence of an attack via the web would be found in these 3 places.

Mount your disk image correctly using the SIFT workstation on /mnt/windows_mount

Now build the commands to create your initial timeline.

Step 1 — Find Partition Starting Sector # mmls image.dd (then calculate the byte offset ##### = starting sector * 512)

Step 2 — Mount image for processing # mount -o ro,noexec,show_sys_files,loop,offset=##### image.dd /mnt/windows_mount

Step 3 — Add Filesystem Data # log2timeline -r -z EST5EDT -f mft,iis,evt /mnt/windows_mount -w intrusion.csv
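The offset used in Step 2 is simply the partition's starting sector from mmls multiplied by the sector size. A small Python sketch of that arithmetic follows; the starting sector of 63 is a hypothetical value chosen for illustration, so substitute whatever mmls reports for your image.

```python
SECTOR_SIZE = 512  # bytes per sector, as used in the steps above


def partition_offset(start_sector, sector_size=SECTOR_SIZE):
    """Convert a starting sector reported by mmls into a byte offset for mount."""
    return start_sector * sector_size


# Hypothetical starting sector; read the real value from the mmls output.
start = 63
offset = partition_offset(start)

print(offset)  # 32256
print(
    "mount -o ro,noexec,show_sys_files,loop,offset=%d image.dd /mnt/windows_mount"
    % offset
)
```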

Once you have run the three commands, your timeline is built. It is best to now sort your timeline using l2t_process. l2t_process is most effective when you bound it by two dates, so that you do not have to look at every time recorded on the system.

Step 4 — Filter Timeline # l2t_process -b intrusion.csv > filtered-timeline.csv

# l2t_process -b /cases/EXAMPLE-DIR-YYYYMMDD-####/timeline.csv 01-16-2008..02-23-2008 > timeline-sorted.csv
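Conceptually, bounding a timeline by two dates means keeping only the rows that fall inside the range and sorting what remains. The Python sketch below illustrates that idea on a tiny in-memory CSV. It is not l2t_process itself: the function name `filter_by_date` is hypothetical, the four-column layout is a reduced stand-in for the real output format, the MM/DD/YYYY date column is an assumption, and the sample rows are invented.

```python
import csv
import io
from datetime import datetime


def filter_by_date(rows, start, end, date_field="date"):
    """Keep rows whose date lies within [start, end] and sort them chronologically."""
    lo = datetime.strptime(start, "%m-%d-%Y")
    hi = datetime.strptime(end, "%m-%d-%Y")

    def row_date(row):
        return datetime.strptime(row[date_field], "%m/%d/%Y")

    kept = [row for row in rows if lo <= row_date(row) <= hi]
    # HH:MM:SS strings sort correctly as text within a single day.
    kept.sort(key=lambda row: (row_date(row), row["time"]))
    return kept


# Invented sample rows with a reduced, hypothetical set of columns.
SAMPLE = """date,time,source,short
03/01/2008,09:15:00,EVT,Service started
02/10/2008,23:45:30,WEBHIST,GET /scripts/cmd.exe
01/02/2008,08:00:00,REG,UserAssist entry
01/20/2008,14:02:11,FILE,C:/inetpub/wwwroot/upload.asp
"""

rows = list(csv.DictReader(io.StringIO(SAMPLE)))
bounded = filter_by_date(rows, "01-16-2008", "02-23-2008")

for row in bounded:
    print(row["date"], row["time"], row["short"])
```

Only the January 20 and February 10 rows survive, in that order; the entries outside the range are dropped.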

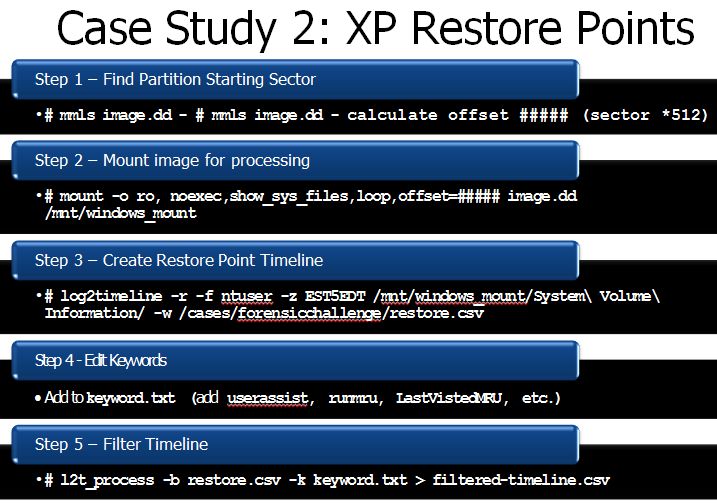

Case Study 2 — Restore Point Examination

In this example, we are using log2timeline to examine Windows XP restore points, looking only for "Evidence of Execution". This shows how you can use log2timeline to produce a targeted timeline of just one piece of the drive image instead of the entire system. In this example, we can see historically the last execution time for many executables on each day a restore point was created.

# log2timeline -r -f ntuser -z EST5EDT /mnt/windows_mount/System\ Volume\ Information/ -w /cases/forensicchallenge/restore.csv

# kedit keyword.txt (add userassist, runmru, LastVisitedMRU, etc.)

# l2t_process -b restore.csv -k keyword.txt > filtered-timeline.csv
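The keyword file is just a list of terms, one per line, and the filter keeps timeline entries that mention any of them. A minimal Python sketch of that idea is shown here. It is not the l2t_process implementation: the function names are hypothetical, the case-insensitive substring match is an assumption, and the timeline lines are invented.

```python
def load_keywords(text):
    """Read one keyword per line, ignoring blank lines and normalizing case."""
    return [line.strip().lower() for line in text.splitlines() if line.strip()]


def keyword_filter(lines, keywords):
    """Return only the timeline lines that contain at least one keyword."""
    return [line for line in lines if any(k in line.lower() for k in keywords)]


# Contents of a hypothetical keyword.txt.
KEYWORDS = load_keywords("userassist\nrunmru\nLastVisitedMRU\n")

# Invented timeline lines for illustration only.
TIMELINE = [
    "01/20/2008,14:02:11,REG,UserAssist key: iexplore.exe",
    "01/20/2008,14:05:00,FILE,C:/WINDOWS/system32/notepad.exe",
    "01/21/2008,09:30:42,REG,RunMRU value: cmd",
]

hits = keyword_filter(TIMELINE, KEYWORDS)
for line in hits:
    print(line)
```

The file entry for notepad.exe is dropped because it matches none of the keywords, leaving only the two registry entries that indicate execution.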

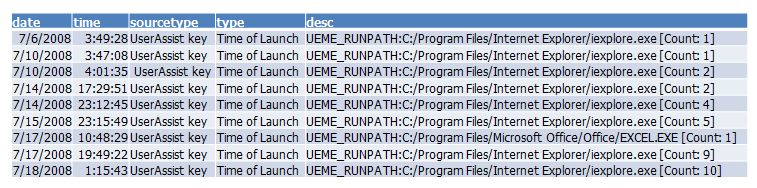

What if a key part of our case was determining when Internet Explorer was last executed over time? Using timeline analysis techniques like those shown above, it is now easy to see when IE was last executed on a specific day. Here you can track the execution of a specific program across multiple days thanks to quick analysis using the restore point data (NTUSER.dat hives) and log2timeline.

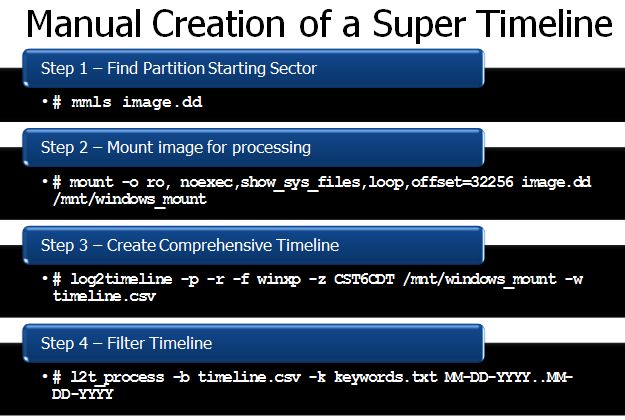

Case Study 3 — Manual Super Timeline Creation

In some cases, you might not want to use the full super timeline. To understand what log2timeline is stepping through automatically, it can be useful to produce the same output by hand. The following are the steps that log2timeline takes care of for us in a single command instead of 3 steps.

In Summary:

Timeline analysis is hard. Understanding how to use log2timeline will help engineer better solutions to unique investigative challenges. The tool was built for maximum flexibility to account for the need for both targeted and overall super timeline creation. Create your own preprocessors for targeted timelines. Use log2timeline to only collect the data you need. Or use it to collect everything.

In the next article we will talk about more efficient ways of analyzing data collected from log2timeline.

CONGRATS!

You just created your first SUPER Timeline... now you get to analyze thousands of entries! (Wha???)

In another upcoming article, I will discuss how to parse and reduce the timeline efficiently so you can analyze the data more easily. SUPER-TIMELINES collect a great deal of data from your operating system, and learning how to parse it into something usable is extremely valuable. In my SANS360 talk, I will take this technique even further. Of course, we go through all these techniques in our full training courses at SANS, specifically FOR508: Advanced Computer Forensic Analysis and Incident Response.

Keep Fighting Crime!

Rob Lee has over 15 years of experience in digital forensics, vulnerability discovery, intrusion detection and incident response. Rob is the lead course author and faculty fellow for the computer forensic courses at the SANS Institute and lead author for FOR408 Windows Forensics and FOR508 Advanced Computer Forensics Analysis and Incident Response.